Introduction to MLOps: From Notebook to Production

You build a model in a notebook. It works. You get 87% accuracy. You're happy. You deploy it to production.

Three months later it's performing at 64% accuracy. You didn't touch it. The data changed. The users changed. The world changed. And now your model is broken in ways that are hard to debug because production is messier than your notebook.

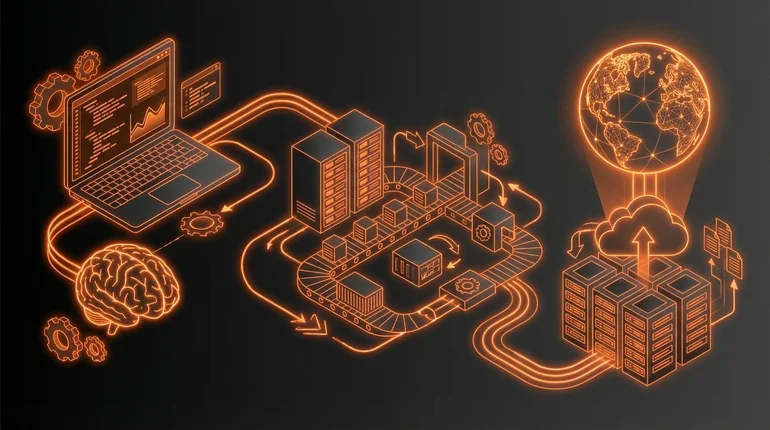

That gap - between a notebook experiment and an actual working system - is where MLOps lives.

The gap between notebook and production

A notebook is a controlled environment. You have a dataset. You split it into train and test. You train once, evaluate once, iterate. When you're done, you have a model file.

Production is chaos. Data arrives continuously. It's different from your training data. Your model makes predictions on it. Those predictions affect real decisions. Sometimes they're wrong and you need to understand why.

Your notebook didn't have to handle any of the following: new data arriving every minute; data in different formats or with different distributions; multiple models running in parallel; rollbacks when a new model is bad; monitoring whether the model still works; retraining when performance degrades; version control for data, models, and code; compliance and audit requirements; or budget and compute constraints.

A notebook solves exactly one problem: build a model. Production needs to solve dozens more. Model evaluation is one of those problems - and it looks different in production than it does in a notebook.

What MLOps is and why it exists

MLOps is the infrastructure and practices that turn a model into a system that works reliably in production.

It borrows from DevOps, the set of practices that make software deployable and maintainable. But ML adds complexity because your system has three moving parts: code, data, and models. In traditional software, the code is the source of truth. In ML, the system's behavior depends at least as much on the training data and the resulting model weights as on the code. Tools like MLflow (experiment tracking and a model registry) and Kubeflow (ML pipelines on Kubernetes) exist to help manage these three parts together.

MLOps exists because ML in production fails in different ways than regular software. A regular application can be tested thoroughly against its specification before deployment. An ML system can never be fully tested, whatever framework you use, because it will always encounter data distributions it didn't see in training. A regular application is deterministic: the same input always produces the same output. An ML system is probabilistic: it makes mistakes, and those mistakes change over time as the data changes.

You need different tools and practices for this reality.

The key stages: data, training, deployment, monitoring

Data management. You need to know what data your model was trained on. You need to track data quality. You need to catch when the data distribution shifts. You need to version datasets so that when a model breaks, you can recreate the exact conditions that created it.
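One lightweight way to make a dataset version identifiable is to store a content hash next to the model. The sketch below is a minimal illustration using only the Python standard library; the function name `dataset_fingerprint`, the toy rows, and the `model_record` layout are assumptions made for this example, not part of any specific tool.

```python
import hashlib
import json


def dataset_fingerprint(rows):
    """Return a SHA-256 hex digest identifying the exact contents of a dataset.

    `rows` is a list of dicts, one per record. Each row is serialized with
    sorted keys, so key order does not affect the hash. Row order does.
    """
    digest = hashlib.sha256()
    for row in rows:
        line = json.dumps(row, sort_keys=True, separators=(",", ":"))
        digest.update(line.encode("utf-8"))
        digest.update(b"\n")
    return digest.hexdigest()


# Hypothetical toy data: two versions that differ in a single value.
train_v1 = [{"age": 34, "plan": "basic"}, {"age": 51, "plan": "pro"}]
train_v2 = [{"age": 34, "plan": "basic"}, {"age": 52, "plan": "pro"}]

# Store the fingerprint with the model so the training data can be identified later.
model_record = {"model_version": "1", "data_sha256": dataset_fingerprint(train_v1)}
```

A hash only tells you whether two datasets are identical. Recreating the data still requires keeping the snapshot itself somewhere, which is what dedicated data versioning tools handle.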

Training. You need to be able to retrain models automatically. You need to track hyperparameters and results. You need to run experiments in parallel and compare them. You need to version models and know which version is in production.
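To make "track hyperparameters and results" concrete, here is a minimal sketch of an experiment log kept as a JSON Lines file. The helper names `log_run` and `best_run`, the file name, and the example hyperparameters are all hypothetical. A dedicated tracker such as MLflow covers the same need with far more features.

```python
import json
import tempfile
from pathlib import Path


def log_run(log_path, params, metrics):
    """Append one training run (hyperparameters plus results) as a JSON line."""
    record = {"params": params, "metrics": metrics}
    with open(log_path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record, sort_keys=True) + "\n")


def best_run(log_path, metric):
    """Return the logged run with the highest value of `metric`."""
    with open(log_path, encoding="utf-8") as f:
        runs = [json.loads(line) for line in f if line.strip()]
    return max(runs, key=lambda run: run["metrics"][metric])


# Hypothetical runs, written to a temporary directory for the example.
log_path = Path(tempfile.mkdtemp()) / "runs.jsonl"
log_run(log_path, {"learning_rate": 0.1, "max_depth": 3}, {"accuracy": 0.84})
log_run(log_path, {"learning_rate": 0.05, "max_depth": 5}, {"accuracy": 0.87})

winner = best_run(log_path, "accuracy")
```

Because every run is appended rather than overwritten, the log doubles as a history of what was tried and what it produced.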

Deployment. You need to serve models with low latency and high availability. You need to be able to roll out new models gradually and roll back if something breaks. You need to handle multiple versions running simultaneously.
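Gradual rollout is often done by sending a small, stable share of traffic to the new model. The sketch below shows one possible way to do that with hash-based bucketing. The function `pick_model`, the labels `"stable"` and `"candidate"`, and the ID format are assumptions for illustration only.

```python
import hashlib


def pick_model(request_id, canary_percent):
    """Route roughly `canary_percent` percent of IDs to the candidate model.

    Hashing the request (or user) ID keeps routing deterministic: the same ID
    always lands in the same bucket, so a user does not flip between models.
    """
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # bucket is in the range 0..99
    return "candidate" if bucket < canary_percent else "stable"


# Hypothetical user IDs, used to see how much traffic the candidate receives.
ids = [f"user-{i}" for i in range(1000)]
share = sum(pick_model(i, 10) == "candidate" for i in ids) / len(ids)
```

In this scheme, rolling back means setting `canary_percent` to 0, and raising it step by step widens the rollout. Any ID routed to the candidate at a low percentage stays on the candidate as the percentage grows.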

Monitoring. You need to track model performance on real data over time. You need to notice when performance degrades and alert the right people. You need to log predictions so you can debug failures. You need to know when to retrain.
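As a rough sketch of "notice when performance degrades", the class below tracks accuracy over a sliding window of recent predictions and signals when it drops under a threshold. The class name, window size, and threshold are hypothetical. It also assumes that true labels eventually arrive for each prediction, which in many production systems happens with a delay or only for a sample.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Track accuracy over the most recent `window` labelled predictions."""

    def __init__(self, window, alert_below):
        self.outcomes = deque(maxlen=window)  # oldest outcomes fall off automatically
        self.alert_below = alert_below

    def record(self, predicted, actual):
        """Store whether one prediction matched its true label."""
        self.outcomes.append(predicted == actual)

    def accuracy(self):
        """Return accuracy over the current window, or None if it is empty."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self):
        """Alert only once the window is full, to avoid noisy early readings."""
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.accuracy() < self.alert_below


# Hypothetical stream of (predicted, actual) pairs: two hits, then two misses.
monitor = RollingAccuracyMonitor(window=4, alert_below=0.75)
for predicted, actual in [(1, 1), (0, 0), (1, 0), (0, 1)]:
    monitor.record(predicted, actual)
```

Here the window holds two correct and two incorrect predictions, so `monitor.accuracy()` is 0.5 and `monitor.should_alert()` is true. In a real system the alert would go to whoever owns the model rather than being polled by hand.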

These stages feed back into each other. Monitoring tells you when to retrain. Training produces a new model. Deployment puts it in production. Monitoring checks if it works. The cycle continues.

Why ML fails differently in production

A regular application usually fails because of bugs. The code has a flaw, you fix it, you deploy the fix. ML systems fail because the world changed.

Your model learned patterns from training data. In production, the data is different. The format is usually the same, but the distribution is not. Users behave differently. Products change. Campaigns succeed or fail. The patterns the model learned no longer hold.

This is called drift and it's inevitable. You can't prevent it. You can only detect it and handle it. The monitoring and drift lesson covers how in detail.
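One common way to detect a shift in a single numeric feature is the Population Stability Index (PSI), which compares how a reference sample and a recent sample fall into the same bins. The sketch below is a minimal standard-library version. The bin count, the `1e-6` floor for empty bins, and the toy age data are assumptions for this example, and PSI is only one of several drift measures.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a reference sample and a recent sample of one numeric feature.

    Bins are equal-width over the range of `expected`. Values of `actual`
    outside that range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values):
        counts = [0] * bins
        for value in values:
            idx = int((value - lo) / width)
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(count / len(values), 1e-6) for count in counts]

    p = proportions(expected)
    q = proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Hypothetical feature: ages at training time, and the same ages shifted by 15 years.
training_ages = [20 + (i % 50) for i in range(1000)]
recent_ages = [age + 15 for age in training_ages]

psi_unchanged = population_stability_index(training_ages, training_ages)
psi_shifted = population_stability_index(training_ages, recent_ages)
```

Identical samples give a PSI of zero, and the shifted sample gives a clearly larger value. A frequently quoted rule of thumb treats values above roughly 0.25 as a significant shift, but that cutoff is a convention and should be tuned per feature.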

A regular application is also deterministic - you can test every branch. An ML model is probabilistic. You can't test every possible input. You don't know in advance what mistakes it will make. You can only observe mistakes in production and try to learn from them.

This is why monitoring matters so much in ML. You can't catch all errors before deployment. You have to catch them after and respond.

Do data scientists need to care about MLOps?

They don't need to become MLOps engineers - that's a different skill. But they should understand the basics. How models go from notebook to production. What happens when data distribution changes. How to instrument a model so it's debuggable.
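Instrumenting a model can start with something as simple as recording every prediction together with its inputs and the model version. The wrapper below is an illustrative sketch: `predict_with_logging`, `toy_model`, the record fields, and the in-memory list used as a log sink are all hypothetical.

```python
import json
import time
import uuid


def predict_with_logging(model_fn, features, model_version, sink):
    """Call the model and append a JSON record of the prediction to `sink`."""
    prediction = model_fn(features)
    record = {
        "prediction_id": str(uuid.uuid4()),  # lets a later label be joined to this row
        "timestamp": time.time(),
        "model_version": model_version,
        "features": features,
        "prediction": prediction,
    }
    sink.append(json.dumps(record))
    return prediction


def toy_model(features):
    """Stand-in for a real model: predicts 1 for frequent visitors."""
    return int(features["visits"] > 3)


log_lines = []  # a real system would write to a file, queue, or database
result = predict_with_logging(toy_model, {"visits": 5}, "1", log_lines)
```

With records like these, a wrong prediction can be traced back to the exact inputs and model version that produced it.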

The companies that are good at ML have data scientists and MLOps engineers who work together. The data scientist doesn't have to implement the monitoring system, but they should understand what needs to be monitored and why. They should think about edge cases that might not show up in training data. They should care that their model is reliable, not just accurate. CI/CD for ML is the next step after understanding these foundations.

Check your understanding

Question 1 of 2

Why does an ML model's performance degrade over time even if the code hasn't changed?

Question 2 of 2

What makes ML systems harder to fully test before deployment compared to regular software?