Artificial Neural Networks and Forward Propagation: What's Actually Happening Inside

Neural networks look mysterious until you realise they're just a chain of arithmetic operations. Multiply. Add. Apply a function. Repeat. The mechanism is boring. The result is interesting. Here's what's actually happening.


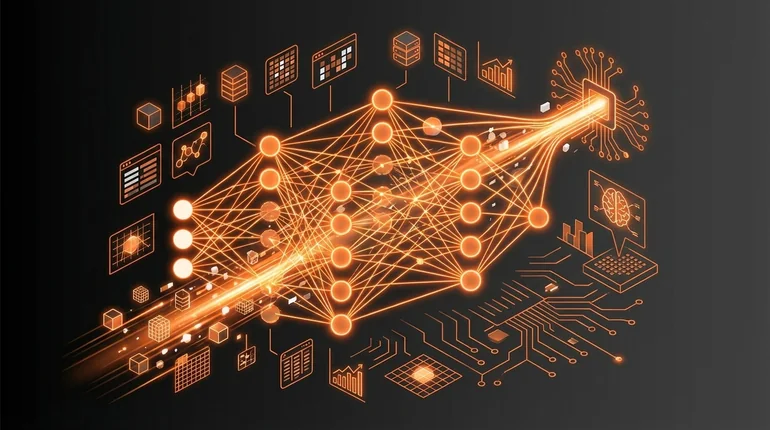

The Basic Structure of a Neural Network

A neural network has three types of layers: input, hidden, and output.

The input layer isn't really a layer of neurons - it's your data. If you're feeding in a 28x28 pixel image of a handwritten digit, that's 784 input values. The concepts here connect directly to how biological neurons differ from artificial ones.

Hidden layers do the actual computation. Each neuron in a hidden layer takes all inputs from the previous layer, multiplies each by a weight, adds them up, adds a bias, and produces a number. That number goes through an activation function. The result is what that neuron outputs.

If you have three hidden layers with 128 neurons each, you get 128 numbers flowing out of the first hidden layer, which become inputs to the second hidden layer. Same operation repeats.

The output layer is the final prediction. For recognising handwritten digits, you'd have 10 output neurons - one for each digit (0-9). Each produces a number. Usually the highest number is your prediction.
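To make the sizes concrete, here is a small sketch that counts the weights and biases in the example network described above (784 inputs, three hidden layers of 128 neurons, 10 outputs). It assumes every layer is fully connected, which is what "takes all inputs from the previous layer" implies; the function name `count_parameters` is just an illustrative choice.

```python
# Layer sizes from the example: 784 inputs, three hidden layers of 128, 10 outputs.
layer_sizes = [784, 128, 128, 128, 10]


def count_parameters(sizes):
    """Return a (weights, biases) pair for each fully connected layer."""
    counts = []
    for n_in, n_out in zip(sizes, sizes[1:]):
        # Each of the n_out neurons has one weight per input and one bias.
        counts.append((n_in * n_out, n_out))
    return counts


per_layer = count_parameters(layer_sizes)
total = sum(weights + biases for weights, biases in per_layer)

for index, (weights, biases) in enumerate(per_layer, start=1):
    print(f"layer {index}: {weights} weights, {biases} biases")
print("total parameters:", total)  # 134794
```

Most of the parameters sit in the first layer, simply because it has the most inputs.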

What a Neuron Does Computationally

Say a neuron has three inputs: x1, x2, x3. It has three weights: w1, w2, w3. And one bias: b.

The neuron computes:

output = activation_function((w1 * x1) + (w2 * x2) + (w3 * x3) + b)

That's it. Multiply, add, apply a function. Nothing magic.
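The formula above can be written as a few lines of Python. This is a minimal sketch: the sigmoid activation and the input, weight, and bias values are assumptions picked for illustration, not something the lesson prescribes.

```python
import math


def sigmoid(z):
    """One common activation function; squashes any number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron_output(inputs, weights, bias, activation=sigmoid):
    """Multiply each input by its weight, add them up, add the bias, activate."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(weighted_sum)


# Hypothetical values for x1..x3, w1..w3 and b.
x = [1.0, 2.0, 3.0]
w = [0.5, -0.25, 0.0]
b = 0.0

# Weighted sum: 0.5*1.0 + (-0.25)*2.0 + 0.0*3.0 + 0.0 = 0.0, and sigmoid(0.0) = 0.5.
print(neuron_output(x, w, b))  # 0.5
```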

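Before training, the weights are typically set to small random values (biases often start at zero). Training adjusts them so that the network's outputs move closer to the correct answers: backpropagation works out how much each weight and bias contributed to the error, and an optimiser such as gradient descent nudges them accordingly. The weights and biases are the only things that change during training - everything else (the network architecture, the number of layers and neurons) is fixed.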
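As a rough illustration of "only the weights and biases change", here is one training update on the simplest possible case. It assumes a single neuron with one input and no activation function, a squared-error loss, and plain gradient descent with an arbitrary learning rate; none of these choices come from the lesson, and a real network computes the same kind of gradients for every layer via backpropagation.

```python
def predict(x, w, b):
    """A single linear neuron: one input, one weight, one bias."""
    return w * x + b


def training_step(x, target, w, b, learning_rate=0.05):
    """Return updated (w, b) after one gradient descent step on squared error."""
    error = predict(x, w, b) - target
    grad_w = 2.0 * error * x  # d(error^2)/dw
    grad_b = 2.0 * error      # d(error^2)/db
    return w - learning_rate * grad_w, b - learning_rate * grad_b


# Hypothetical training example and starting parameters.
x, target = 2.0, 3.0
w, b = 0.5, 0.0

loss_before = (predict(x, w, b) - target) ** 2
w, b = training_step(x, target, w, b)
loss_after = (predict(x, w, b) - target) ** 2

print(loss_before, loss_after)  # the second number should be smaller
```

Note that the structure of `predict` never changes; only the numbers `w` and `b` do.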

What Forward Propagation Means

Forward propagation is moving data through the network from input to output.

You have some data. The input layer passes it to the first hidden layer. Each neuron in the first hidden layer does its multiply-and-add. The results flow to the second hidden layer. Same operation. Then to the third. Then to the output.
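The flow just described can be sketched as a loop over layers. This is a plain-Python illustration, not an efficient implementation: the ReLU activation for hidden layers, the untransformed output layer, the random weight range, and all function names are assumptions made for the example.

```python
import random


def relu(z):
    return max(0.0, z)


def identity(z):
    return z


def layer_forward(inputs, weights, biases, activation):
    """weights holds one row per neuron; each row has one weight per input."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Feed the outputs of each layer in as the inputs of the next."""
    values = inputs
    for weights, biases, activation in layers:
        values = layer_forward(values, weights, biases, activation)
    return values


def random_layer(n_in, n_out, activation, rng):
    """An untrained layer: small random weights, zero biases."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out, activation


rng = random.Random(0)
sizes = [784, 128, 128, 128, 10]
layers = [random_layer(a, b, relu, rng) for a, b in zip(sizes[:-2], sizes[1:-1])]
layers.append(random_layer(sizes[-2], sizes[-1], identity, rng))

image = [rng.random() for _ in range(784)]  # stand-in for a flattened 28x28 image
scores = forward(image, layers)
print(len(scores))  # 10, one number per digit
```

Because the weights here are random, the ten output numbers are meaningless; the point is only that the same multiply-add-activate step runs once per layer.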

The name "forward propagation" distinguishes it from "backward propagation," where errors flow backward to adjust the weights. Forward propagation is just the straightforward path: data in, prediction out. The activation functions that shape each neuron's output are explained in the activation functions lesson.

When you give a trained network an image, you're doing forward propagation. The network outputs one number per class; if the output layer ends in a softmax, those numbers can be read as probabilities. Pick the biggest one. That's your prediction.
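Here is a small sketch of that last step: turning ten output numbers into probabilities and picking a class. It assumes the raw outputs are converted with a softmax, which is a common choice for classification but is not stated in the lesson; the scores below are made up.

```python
import math


def softmax(scores):
    """Convert raw scores to positive values that sum to 1."""
    highest = max(scores)
    # Subtracting the maximum avoids overflow and does not change the result.
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def predict(values):
    """Index of the largest value, i.e. the predicted class."""
    return max(range(len(values)), key=lambda i: values[i])


# Hypothetical raw outputs for digits 0-9.
scores = [0.1, 2.0, -1.0, 0.3, 0.0, 1.2, -0.5, 0.4, 0.2, 0.9]
probs = softmax(scores)

print(predict(scores))  # 1
print(predict(probs))   # 1, softmax keeps the ordering
```

Since softmax preserves the ordering of the scores, the predicted class is the same whether you pick the biggest raw score or the biggest probability.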

Weights and Biases Explained Plainly

Weights control how much each input matters to a neuron's output.

If a weight is close to zero, that input barely influences the neuron. If it's a large positive number, a positive input pushes the weighted sum up strongly. If it's negative, a positive input pushes the sum down.

The bias is a constant added before the activation function. It's useful because sometimes you want a neuron to have a baseline - to always fire at least a little bit, or to require a threshold before activating.

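Weights let you turn inputs up or down. Bias lets you shift everything up or down. On their own these only give a straight-line (linear) mapping; combined with a non-linear activation function and enough neurons, they give the network the flexibility to approximate a very wide range of input-to-output mappings.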
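The effect of weights and bias is easy to see on a neuron with a single input. This sketch assumes a ReLU activation (output is zero unless the weighted sum is positive); the specific numbers are arbitrary.

```python
def relu(z):
    return max(0.0, z)


def neuron(x, w, b):
    """One input, one weight, one bias, ReLU activation."""
    return relu(w * x + b)


x = 2.0

# Weights: turning the input up, down, or almost off.
print(neuron(x, 0.01, 0.0))  # tiny weight, the input barely registers
print(neuron(x, 1.5, 0.0))   # 3.0, positive weight amplifies the input
print(neuron(x, -1.5, 0.0))  # 0.0, negative weight pushes the sum below zero

# Bias: shifting the whole thing up or down.
print(neuron(x, 1.0, -3.0))  # 0.0, negative bias acts as a threshold the input must beat
print(neuron(0.0, 1.0, 1.0)) # 1.0, positive bias gives a baseline even with zero input
```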

When training is complete, the weights encode what the network has learned. Each weight is just a number, but collectively, millions of weights encode the difference between an untrained network that produces random outputs and a trained one that can recognise dogs in photos or translate between languages. Once a network is trained like this, it can be studied in the context of the broader family of ML models.

Why Neural Networks Aren't Mysterious

Neural networks sound complicated because they're described as if something sophisticated is happening. "Deep learning." "Neural architecture." "Non-linear function approximation." The jargon makes it sound profound. Wikipedia's neural network article cuts through some of that jargon well.

But it's not. It's multiply, add, apply a function, repeat. The profound part is that stacking these boring operations correctly and training them on enough data somehow produces systems that recognise faces, translate languages, and generate text. That's the interesting bit.

The mechanism isn't the magic. The result is.

People build it up as mysterious because mystery sells. Once you understand a neural network as a massive chain of arithmetic - one you can write in a hundred lines of Python - most of deep learning becomes comprehensible. Hard sometimes, but comprehensible.

Check your understanding


Question 1 of 2

What does a single artificial neuron compute?

Question 2 of 2

What changes during neural network training?