LLMs, AI Agents and RAG: Making Sense of the AI Tools Landscape in 2026

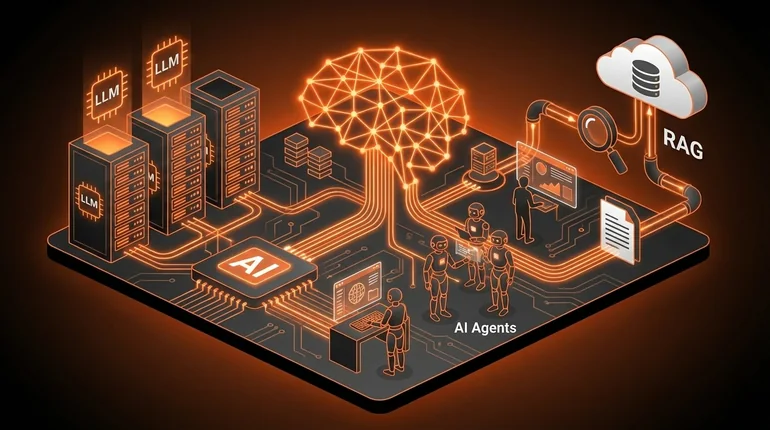

The AI tools market is fractured and moving fast. LLMs, agents, RAG - these terms get thrown around, often incorrectly. Three concepts explain almost everything: what LLMs actually do, how agents extend them, and why RAG makes them useful in production.


Large Language Models (LLMs) - The Foundation

An LLM is a generative neural network trained on vast amounts of text to predict the next token (roughly, the next word or word fragment). That's it.

When you interact with ChatGPT, Claude, Gemini, or Llama, you're talking to an LLM. The model was trained on books, websites, code repositories, research papers - billions to trillions of words. It learned statistical patterns in how language works. Give it a prompt and it predicts which words should come next, then keeps predicting until it's done.

This is why LLMs can do surprisingly well at tasks they were never explicitly trained on. They learned language deeply enough that they can approximate reasoning, coding, maths, writing, and explanation. They're not actually thinking or understanding. But the patterns they learned allow them to generate plausible text about almost anything.

The mechanism behind it: take "The cat sat on the" and ask an LLM what comes next. Based on patterns from billions of examples, it calculates that "mat" is statistically likely. Given "The cat sat on the mat," it calculates that a full stop or continuation is likely next. This all happens through matrix mathematics and attention mechanisms that let the system weigh different parts of the input when generating output.
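To make the "predict the likely next word" idea concrete, here is a toy sketch. It is not how a real LLM works internally: it swaps the neural network and attention for simple counts of which word followed each two-word context in a tiny made-up corpus. The corpus and the function names `train` and `next_word_probs` are illustrative assumptions.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus; a real LLM trains on vastly more text.
CORPUS = (
    "the cat sat on the mat . "
    "the dog sat on the mat . "
    "the cat slept on the sofa ."
)

def train(text, context_size=2):
    """Count which word follows each run of `context_size` words."""
    counts = defaultdict(Counter)
    words = text.split()
    for i in range(len(words) - context_size):
        context = tuple(words[i:i + context_size])
        counts[context][words[i + context_size]] += 1
    return counts

def next_word_probs(counts, prompt, context_size=2):
    """Turn follow-counts for the prompt's last words into probabilities."""
    context = tuple(prompt.lower().split()[-context_size:])
    followers = counts.get(context, Counter())
    total = sum(followers.values())
    if total == 0:
        return {}  # context never seen: this toy model has nothing to say
    return {word: n / total for word, n in followers.items()}

model = train(CORPUS)
probs = next_word_probs(model, "The cat sat on the")
best = max(probs, key=probs.get)  # the single most likely next word
```

In this corpus "on the" is followed by "mat" twice and "sofa" once, so `best` is "mat". A real model does the same kind of thing with learned weights over a far larger context, which is why it generalises where this toy cannot.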

The catch is fundamental, not fixable with more data. LLMs are predicting probable sequences - not reasoning. They hallucinate confidently. They don't know what they don't know. If an LLM generated something, there's no guarantee it's accurate. It is just the statistically likely next words.

AI Agents - Making LLMs Actually Do Things

An AI agent is an LLM connected to tools so it can take action beyond generating text.

A plain LLM can only talk to you. An AI agent can use tools: search the web, run code, access databases, send emails, check calendars. The pattern is: you give the agent a task, it decides which tool it needs, uses that tool, gets the result, then decides on the next step or reports back.
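The task, decide, use tool, read result, repeat pattern can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any framework's API: `fake_llm` is a scripted stand-in for a real model call, and the tools, prices, and hotel cost are made up.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tools: plain functions the agent is allowed to call.
def get_flight_price(route: str) -> str:
    prices = {"LHR-JFK": "420", "LHR-CDG": "95"}  # made-up data
    return prices.get(route, "unknown")

def add(args: str) -> str:
    return str(sum(float(x) for x in args.split(",")))

TOOLS: Dict[str, Callable[[str], str]] = {
    "get_flight_price": get_flight_price,
    "add": add,
}

Step = Tuple[str, str, str]  # (action, argument, result)

def fake_llm(task: str, history: List[Step]) -> Tuple[str, str]:
    """Scripted stand-in for a model. A real agent would send the task and
    history to an LLM and parse the action it asks for."""
    if len(history) == 0:
        return ("get_flight_price", "LHR-JFK")
    if len(history) == 1:
        return ("add", f"{history[0][2]},180")  # flight + made-up hotel cost
    return ("finish", f"Estimated trip cost: {history[1][2]}")

def run_agent(task: str, decide=fake_llm, max_steps: int = 5) -> str:
    history: List[Step] = []
    for _ in range(max_steps):  # hard cap so the agent cannot loop forever
        action, arg = decide(task, history)
        if action == "finish":
            return arg
        if action not in TOOLS:
            history.append((action, arg, "error: unknown tool"))
            continue
        history.append((action, arg, TOOLS[action](arg)))
    return "stopped: step limit reached"

result = run_agent("Estimate the cost of a London to New York trip")
```

The step cap and the unknown-tool check are the simplest guards against a model that picks the wrong tool or never decides to finish.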

A chatbot can tell you how to book a flight. An agent can actually book it - if you give it permission.

Some concrete examples. An agent could read your email, identify which messages need replies, search your knowledge base for relevant context, draft responses, and wait for your approval before sending. An agent could debug code by running it, reading the error, modifying the code, running it again, and iterating. An agent could research a trip by checking flight prices, hotel availability, and reviews, then summarise options with total costs.

The challenge right now: agents aren't reliable. They can misunderstand results, use the wrong tool, or get stuck in loops. They need careful setup and human oversight. But agents are where the real utility lies. Text generation is impressive but limited. Agents that take action on real systems are useful in a different way - they save time on tasks that require multiple steps and tool use.

RAG (Retrieval-Augmented Generation)

RAG is a technique where the system retrieves relevant information from documents or databases and hands it to the LLM before it generates a response, rather than relying only on what was in the model's training data.

The problem RAG solves: LLMs are trained once and have a knowledge cutoff. They don't know recent events. They don't know your company's internal data. They hallucinate about both, confidently.

RAG connects the LLM to a retrieval system. When you ask a question, the system searches a database for relevant documents, feeds those to the LLM along with your question, and the LLM generates an answer based on the documents. The model can cite sources. Answers are grounded in retrieved content rather than only in statistical patterns from training, which reduces hallucination but does not eliminate it.
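A minimal sketch of the retrieve-then-prompt flow follows. Production systems typically use learned embeddings and a vector database for retrieval; this example swaps in plain word-count cosine similarity so it runs with the standard library alone. The help articles, document ids, and function names are illustrative assumptions, and the final call to an LLM is left out.

```python
import math
import re
from collections import Counter

DOCS = {  # made-up help articles keyed by a hypothetical id
    "reset-password": "To reset your password, open Settings and choose Reset Password.",
    "refund-policy": "Refunds are available within 30 days of purchase.",
    "export-data": "You can export your data as CSV from the Account page.",
}

def vectorize(text):
    """Bag-of-words vector: lowercase word -> count."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0

def retrieve(question, docs, k=1):
    """Return the ids of the k documents most similar to the question."""
    q = vectorize(question)
    ranked = sorted(
        docs, key=lambda doc_id: cosine(q, vectorize(docs[doc_id])), reverse=True
    )
    return ranked[:k]

def build_prompt(question, docs, k=1):
    """Assemble the text that would be sent to the LLM."""
    sources = retrieve(question, docs, k)
    context = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in sources)
    return (
        "Answer using only the sources below and cite the source id.\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )

question = "How do I reset my password?"
top = retrieve(question, DOCS)
prompt = build_prompt(question, DOCS)
```

Because the source ids travel with the text, the model has something to cite, and a reviewer can check the answer against the retrieved passage.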

Enterprise support systems using RAG pull relevant help articles when answering customer questions, so answers reflect the current documentation. Research assistants using RAG search internal documents and databases rather than guessing. Medical systems using RAG pull current treatment guidelines rather than relying on training data that may be two years old.

This is why enterprises care: RAG makes LLMs reliable enough to use for actual business decisions. An LLM that confidently makes things up is useful for drafts. An LLM grounded in your real data is useful for operations.

The State of the AI Tools Market in 2026

OpenAI dominates consumer mindshare with ChatGPT. It's the brand people recognise. GPT-4o and later models are genuinely capable, the tooling is mature, and the ecosystem is large.

Anthropic's Claude has become the preference for many developers for reasoning tasks and long-document work. Google's Gemini is deeply integrated with Google products and strong on multimodal tasks.

Open-source and open-weight models have caught up more than most expected. Meta's Llama series is freely downloadable (under Meta's own licence) and competitive on many benchmarks. Mistral and others proved you don't need billions in funding to produce capable models. For many use cases, a model running on your own infrastructure can beat a paid API - lower cost at volume, better privacy, no provider rate limits.

The agent ecosystem is still messy. Frameworks like LangChain and dozens of alternatives claim to make agents easy to build. Most are still unstable. This will consolidate over the next year or two.

RAG has become a standard pattern. Vector databases like Pinecone and Weaviate exist largely to support RAG workflows. Most enterprise AI implementations are some variation of: mainstream LLM + RAG over company data.

What Actually Matters When Choosing AI Tools

Accuracy beats features. An LLM that hallucinates eloquently is worse than one that admits uncertainty. Test the tool on your actual use case before committing.

Integration beats raw capability. A tool that connects to your existing data and systems is more valuable than a marginally better tool that's isolated. RAG only helps if you can feed it your data.

Cost scales with usage. API-based tools like OpenAI charge per token. Calculate what your costs look like at real usage volumes, not promotional pricing. Open-source models running on your own hardware have different economics that often win at scale.
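A back-of-envelope sketch of that calculation is below. Every number in it is a placeholder assumption, not a real price: substitute your provider's current per-million-token rates, your own traffic, and your own estimate of self-hosting costs.

```python
def monthly_api_cost(requests_per_day, input_tokens, output_tokens,
                     price_in_per_million, price_out_per_million, days=30):
    """Estimated monthly spend for a per-token API."""
    cost_units = (input_tokens * price_in_per_million
                  + output_tokens * price_out_per_million)
    return requests_per_day * days * cost_units / 1_000_000

def break_even_requests_per_day(fixed_monthly_cost, input_tokens, output_tokens,
                                price_in_per_million, price_out_per_million,
                                days=30):
    """Daily volume at which a fixed-cost setup matches the API bill."""
    one_request_per_day = monthly_api_cost(
        1, input_tokens, output_tokens,
        price_in_per_million, price_out_per_million, days,
    )
    return fixed_monthly_cost / one_request_per_day

# Placeholder scenario: 10,000 requests/day, 1,500 input and 500 output
# tokens each, at hypothetical rates of 3.00 and 15.00 per million tokens.
api_cost = monthly_api_cost(10_000, 1_500, 500, 3.00, 15.00)

# Hypothetical self-hosted setup costing a flat 2,000 per month.
break_even = break_even_requests_per_day(2_000, 1_500, 500, 3.00, 15.00)
```

With these placeholder figures the API bill comes to roughly 3,600 a month, and the fixed-cost setup breaks even at around 5,600 requests a day. The fixed figure ignores engineering time and idle capacity, so treat the break-even as a lower bound.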

Reliability matters more than cutting-edge. An agent that works 80% of the time is frustrating in production. A human-in-the-loop system that catches the 20% it gets wrong is actually useful.

My take: start with mainstream LLMs (GPT-4, Claude 3.5, Gemini Pro) for most tasks - they're expensive but well-documented and reliable. If you're building agents, start simple, define tools clearly, and keep humans in the loop. If you're deploying for enterprise, RAG plus your own data is the default path. Don't assume you need the newest, largest model. Usually you need something that works reliably on your specific problem, and that's often a smaller, cheaper model with good prompting.

Check your understanding


Question 1 of 2

What is the key problem RAG (retrieval-augmented generation) solves?

Question 2 of 2

What makes an AI agent different from a plain LLM chatbot?