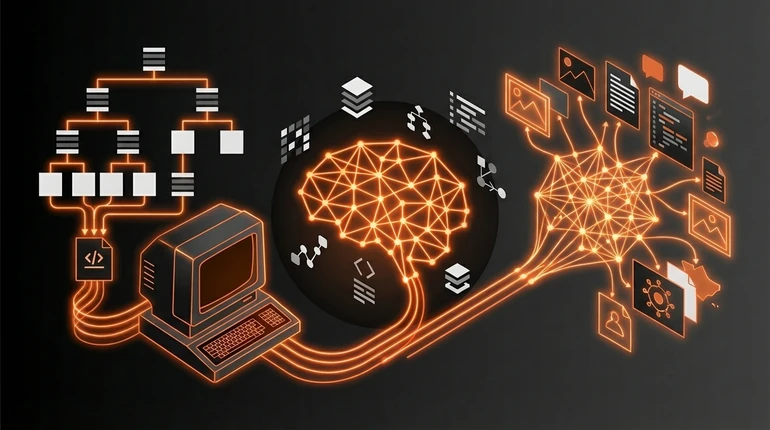

Traditional AI vs Generative AI: How They Work Differently Under the Hood

Before 2022, most AI systems people encountered did one of two things: classify or predict. Generative AI changed the conversation entirely by asking a different question - can the system create something new? The distinction is architectural, not just marketing.


Traditional AI: Classification and Prediction

Traditional AI systems - in machine learning terms mostly "discriminative" models, and before those, hand-written rule-based systems - are built to make decisions about existing data. They don't create anything. The ML and deep learning lesson covers the technical family tree behind these systems.

A spam filter classifies emails as spam or not spam. A loan approval system predicts whether a borrower will default. A recommendation system picks which film to show you from a catalogue. These all follow the same pattern: the system takes an input, processes it through patterns it learned, and outputs a decision or prediction.

The output is always a choice from existing options or a numerical prediction. Same input generally produces the same output. Traditional AI is excellent at these tasks - spam filters work, loan models work, recommendations work well enough to keep people engaged for hours. The limit is that it can't generate anything genuinely new. It can only evaluate, classify, or predict from what already exists.
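The input-to-decision pattern described above can be sketched in a few lines. This is a minimal illustration, not how any production spam filter is built: the training emails are made up, and the `train` and `classify` helpers are hypothetical names for a small naive Bayes word-count classifier.

```python
import math
from collections import Counter

# Toy labelled training data (made up for illustration).
TRAIN = [
    ("win a free prize now", "spam"),
    ("free money click now", "spam"),
    ("meeting agenda for monday", "ham"),
    ("lunch on monday with the team", "ham"),
]

def train(examples):
    """Count how often each word appears under each label."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.split())
    return word_counts, label_counts

def classify(text, word_counts, label_counts):
    """Return the label with the highest naive Bayes log-score."""
    vocab = set(word_counts["spam"]) | set(word_counts["ham"])
    total_docs = sum(label_counts.values())
    scores = {}
    for label in ("spam", "ham"):
        total_words = sum(word_counts[label].values())
        score = math.log(label_counts[label] / total_docs)
        for word in text.split():
            # Laplace smoothing so unseen words do not zero out the score.
            score += math.log(
                (word_counts[label][word] + 1) / (total_words + len(vocab))
            )
        scores[label] = score
    return max(scores, key=scores.get)

word_counts, label_counts = train(TRAIN)
print(classify("free prize now", word_counts, label_counts))             # spam
print(classify("agenda for the team lunch", word_counts, label_counts))  # ham
```

The output is always one of two existing labels, and calling `classify` twice on the same text gives the same answer, which is the behaviour this section describes.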

Generative AI: Creating Something That Didn't Exist Before

Generative AI flipped the approach. Instead of classifying existing things, it generates new ones: text, images, video, audio, code. The generative AI models lesson goes deep on the specific architectures that make this possible.

When you ask ChatGPT to write a poem, it's not retrieving an existing poem or combining existing poems. It's generating a new one that follows the statistical patterns of poetry it learned. That poem has never existed before. When you use an image generator to create a picture, the image doesn't exist in its training data - the system learned what makes an image look like what you described, and it generated new pixels in that pattern.

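Here's what's strange: nobody fully understands why this works as well as it does. The system isn't "understanding" anything in the way humans do. A language model predicts the next token - roughly, the next word or word fragment - using patterns encoded in billions of learned parameters. Image generators typically use a different procedure, such as gradually removing noise from an image, but they rely on the same idea of learned statistical patterns. Either way, the output often looks like genuine creation.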
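Next-token prediction can be shown at toy scale. The sketch below is an assumption-laden stand-in, not how ChatGPT or any real model is implemented: it counts which word follows which in a made-up corpus (a bigram model) and then samples one token at a time. The corpus and the `train_bigrams` and `generate` names are hypothetical.

```python
import random
from collections import Counter, defaultdict

# Tiny made-up corpus; real models train on vastly more text.
CORPUS = "the cat sat on the mat . the dog sat on the rug ."

def train_bigrams(text):
    """For each token, count which tokens follow it in the corpus."""
    counts = defaultdict(Counter)
    tokens = text.split()
    for current, nxt in zip(tokens, tokens[1:]):
        counts[current][nxt] += 1
    return counts

def generate(counts, start, length, seed=0):
    """Repeatedly sample the next token in proportion to observed counts."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length):
        followers = counts.get(out[-1])
        if not followers:
            break  # no known continuation for this token
        words = list(followers)
        weights = list(followers.values())
        out.append(rng.choices(words, weights=weights)[0])
    return " ".join(out)

counts = train_bigrams(CORPUS)
print(generate(counts, "the", 8, seed=1))
```

Depending on the seed, the generated sentence may be one that never appears in the corpus (for example, a cat ending up on the rug), even though every individual word-to-word step was seen in training. Real language models replace the count table with a neural network, but the generate-one-token-then-repeat loop is the same basic shape.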

Why Generative AI Changed Everything

The AI tools and platforms lesson maps the current landscape of what's available. There are three reasons generative AI exploded while traditional AI stayed specialised:

It's easier to scale - you mostly just need data. Traditional AI needs labelled datasets and task-specific metrics. Generative AI can train on unlabelled text from the internet and improve largely through scale.

It's immediately obvious whether it's working. You don't know your spam filter is doing its job until you look in the junk folder. ChatGPT generates text you can read and judge instantly. That visibility changed public perception of what AI can do.

It's commercially broader. Traditional AI solves specific problems for specific companies. Generative AI appeared applicable to nearly everything - writing, coding, art, customer service, research. Every company saw potential applications, which drove investment and attention in a way narrow AI never did.

The Architectural Difference

Traditional machine learning models learn decision functions - they get good at drawing boundaries between categories. Given an email, they learn where the line is between spam and not spam. On the generative side, transformers and autoencoders are among the key architectures worth understanding.

Generative models learn to approximate the distribution of the training data. They learn the statistical patterns in how things are made - so they can sample from that distribution to make new things. This is why traditional AI needs labelled data while generative AI doesn't (as much): for classification you need to know the right answer for each example; for generation you just need examples of the thing you want to generate, because the training signal comes from the examples themselves.
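The contrast between learning a boundary and learning a distribution can be made concrete with one-dimensional data. This is a deliberately simplified sketch under stated assumptions: all numbers are made up, the "classifier" is just a threshold, the "generative model" is a single Gaussian, and the function names are hypothetical.

```python
import random
import statistics

# Labelled data for the discriminative model: (value, label). Made-up numbers.
LABELLED = [(1.0, 0), (1.5, 0), (2.0, 0), (4.0, 1), (4.5, 1), (5.0, 1)]

def fit_threshold(examples):
    """Pick the boundary with the fewest training errors.

    The model predicts label 1 when value > boundary.
    """
    values = sorted(v for v, _ in examples)
    candidates = [(a + b) / 2 for a, b in zip(values, values[1:])]

    def errors(boundary):
        return sum((v > boundary) != bool(label) for v, label in examples)

    return min(candidates, key=errors)

def predict(value, boundary):
    """Discriminative output: a choice between two existing labels."""
    return int(value > boundary)

# Unlabelled examples of the thing we want to generate. Made-up numbers.
EXAMPLES = [4.0, 4.5, 5.0, 4.2, 4.8]

def fit_gaussian(values):
    """Approximate the data distribution with a mean and standard deviation."""
    return statistics.mean(values), statistics.stdev(values)

def sample(mean, stdev, n, seed=0):
    """Generative output: new values drawn from the learned distribution."""
    rng = random.Random(seed)
    return [rng.gauss(mean, stdev) for _ in range(n)]

boundary = fit_threshold(LABELLED)
print(boundary, predict(4.2, boundary))

mean, stdev = fit_gaussian(EXAMPLES)
print(sample(mean, stdev, 3))
```

Note what each model needed: `fit_threshold` cannot work without the labels, while `fit_gaussian` only needs examples. The sampled values resemble the examples but are new numbers rather than copies.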

It's also why generative models fail differently. Traditional models fail by being uncertain or wrong about borderline cases. Generative models fail by confidently generating plausible-sounding content that is factually wrong, because they are trained to produce likely output rather than verified output. If the training data contained incorrect information stated with confidence, the model will tend to reproduce that confident incorrectness. See the lesson on hallucinations and bias for the full picture of why this matters.

When Traditional AI Still Wins

The hype around generative AI is real but sometimes misleading - it isn't better for everything.

Traditional AI is still superior in three situations. When you need reliability and interpretability: a simple loan-default model, such as a logistic regression or a decision tree, can show you which factors drove a decision, while a generative model generally can't explain its output in that way. When you need real-time, low-latency decisions: a spam filter works in milliseconds with consistent results. And when you need low false positive rates: in medical diagnosis, precision matters more than creativity, and a classification model's decision threshold can be tuned for specificity far more precisely than a generative model's output.
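Tuning a classifier for a low false positive rate usually comes down to moving its decision threshold. The sketch below assumes a model that already outputs a score between 0 and 1 per case. The scores and labels are made up, and `pick_threshold` is a hypothetical helper, not a library function.

```python
# (model_score, true_label) pairs; made-up values for illustration.
SCORED = [
    (0.95, 1), (0.90, 1), (0.80, 0), (0.70, 1),
    (0.60, 0), (0.40, 1), (0.30, 0), (0.10, 0),
]

def false_positive_rate(scored, threshold):
    """Share of true negatives that the threshold flags as positive."""
    negatives = [s for s, y in scored if y == 0]
    return sum(s >= threshold for s in negatives) / len(negatives)

def recall(scored, threshold):
    """Share of true positives that the threshold catches."""
    positives = [s for s, y in scored if y == 1]
    return sum(s >= threshold for s in positives) / len(positives)

def pick_threshold(scored, max_fpr):
    """Lowest threshold whose false positive rate stays within max_fpr."""
    for t in sorted({s for s, _ in scored}):
        if false_positive_rate(scored, t) <= max_fpr:
            return t
    return None  # no candidate threshold meets the target

for target in (0.25, 0.0):
    t = pick_threshold(SCORED, target)
    print(target, t, recall(SCORED, t))
```

On this toy data, allowing a 25% false positive rate gives a threshold of 0.70 and catches three of four positives. Demanding zero false positives pushes the threshold to 0.90 and catches only two. That explicit, measurable trade-off is the kind of control the paragraph above refers to.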

Generative AI is better when you need flexibility, creativity, and good-enough answers rather than precise ones. The real future probably isn't one replacing the other - it's both used for what they're actually good at. You'll use generative AI to draft and explore, and traditional AI to verify and decide. For a practical grounding in how to evaluate any model's performance, see model evaluation.

Check your understanding

Question 1 of 2

What is the fundamental architectural difference between traditional AI and generative AI?

Question 2 of 2

For which of the following tasks is traditional AI still likely to outperform generative AI?