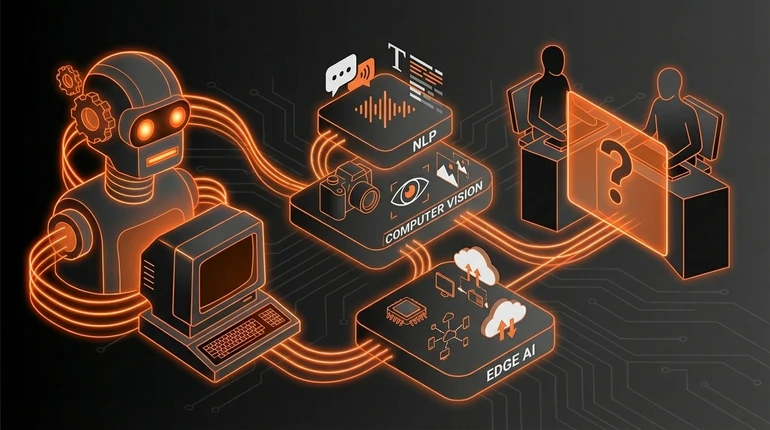

NLP, Computer Vision and Edge AI: The Key AI Subfields You Need to Know

AI isn't one thing. Different subfields tackle completely different types of problems. Three of them are everywhere right now: NLP for language, computer vision for images, and edge AI for on-device processing. Each has different strengths, limits, and applications.


Natural Language Processing (NLP)

NLP is the field that handles human language - text, speech, conversation. Everything that involves words.

It produced ChatGPT, Copilot, and every text-based AI tool you've used. Translation, question answering, summarisation, text generation, spam filtering, information extraction - all NLP. The Wikipedia article on natural language processing gives a good overview of the field's history.

Some real examples. Gmail's Smart Reply suggests email responses by predicting what most people say in reply to common email types. Search engines understand awkward phrasing because NLP parses intent, not just keywords. Medical systems scan research papers and extract relevant findings at scale. Voice assistants work because speech recognition converts audio to text, then NLP understands it.

The important limit: NLP systems are good at pattern matching in language but poor at understanding meaning or truth. ChatGPT can write essays about topics it doesn't comprehend. It can confidently produce false information. It can't tell accurate sources from fiction if both appeared in its training data. That's not a bug waiting to be fixed - it's a consequence of how these systems work. The transformers lesson explains the architecture behind these systems.
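
To make the "pattern matching, not truth" point concrete, here is a deliberately tiny sketch. It is not how ChatGPT works (real systems use transformers trained on vast amounts of text); it is a toy next-word counter, and the corpus, `train_bigrams` and `complete` are all invented for illustration. The assumption it illustrates is only this: a model that learns which words tend to follow which has no separate notion of whether a sentence is true.

```python
from collections import Counter, defaultdict

def train_bigrams(sentences):
    """Count, for each word, which words follow it in the training text."""
    following = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            following[current][nxt] += 1
    return following

def complete(following, prompt, max_words=5):
    """Extend the prompt by repeatedly appending the most frequent next word."""
    words = prompt.lower().split()
    for _ in range(max_words):
        candidates = following.get(words[-1])
        if not candidates:
            break  # the model has never seen anything follow this word
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)

# Invented toy corpus: the false statement simply appears more often.
corpus = [
    "the moon is made of cheese",
    "the moon is made of cheese",
    "the moon is made of rock",
]

model = train_bigrams(corpus)
print(complete(model, "the moon"))
```

The completion ends in "cheese" because that continuation was more frequent in the training text, not because the model weighed any evidence. Frequency is the only signal it has.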

NLP also skews heavily towards English. Systems for other languages exist but are generally less capable because they were trained on less data.

Computer Vision

Computer vision is the field that handles images and video - the systems that let AI see.

In some ways it's the more mathematically mature field. An image is just a grid of numbers. You can apply operations to those numbers to find patterns, and the mathematics for doing so is well established.
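
A minimal sketch of what "applying operations to a grid of numbers" can look like, using plain Python lists. The 5x5 image, the kernel values and the function name `filter_image` are assumptions made up for this example. The operation is the sliding-window filter that convolutional networks are built on, though real networks learn their kernel values rather than having them written by hand.

```python
# A 5x5 greyscale "image": 0 is dark, 9 is bright.
# The left two columns are dark and the right three are bright.
image = [
    [0, 0, 9, 9, 9],
    [0, 0, 9, 9, 9],
    [0, 0, 9, 9, 9],
    [0, 0, 9, 9, 9],
    [0, 0, 9, 9, 9],
]

# A hand-written 3x3 kernel that responds to dark-to-bright changes
# from left to right, i.e. vertical edges.
kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

def filter_image(image, kernel):
    """Slide the kernel over the image; at each position, sum the
    element-wise products of the kernel and the pixels beneath it."""
    k = len(kernel)
    out_rows = len(image) - k + 1
    out_cols = len(image[0]) - k + 1
    output = []
    for r in range(out_rows):
        row = []
        for c in range(out_cols):
            total = sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(k)
                for j in range(k)
            )
            row.append(total)
        output.append(row)
    return output

edges = filter_image(image, kernel)
for row in edges:
    print(row)  # each row is [27, 27, 0]
```

The large values mark window positions that straddle the dark-to-bright boundary; the zero marks the window that sits entirely in the uniformly bright region. A pattern (an edge) has been found using nothing but multiplication and addition.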

Computer vision systems can identify objects in images, detect and analyse faces, extract text from photos, segment images into regions, estimate 3D structure from 2D images, and track movement in video. The architecture most associated with this work is the convolutional neural network.

Where it shows up: autonomous vehicles use it to see roads, pedestrians, and other cars under varying light and weather. Medical imaging systems flag abnormalities in X-rays and MRI scans for doctors to review. Retailers use it to spot empty shelves. Amazon's cashier-less stores track what customers pick up. Manufacturing plants inspect products for defects faster and more consistently than humans.

The limits matter. Computer vision is reliable for images that resemble its training data. Unusual angles, poor lighting, or object types it hasn't seen cause failures. It's computationally expensive. And it's brittle in a specific way: adversarial examples - images with tiny deliberate modifications that humans can't see - can completely fool these systems. This is one of several limitations explored in the ML and deep learning lesson.
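
The adversarial-example idea can be sketched with a toy linear classifier. Everything here is an assumption for illustration: the 100-pixel "image", the weights, the "cat" label and the function names are invented, and real attacks target deep networks using gradients (the fast gradient sign method is one well-known approach). For a linear model, the direction that lowers the score fastest is simply the sign of each weight, so the same idea fits in a few lines.

```python
def score(weights, pixels):
    """Linear classifier: a weighted sum of pixel values."""
    return sum(w * p for w, p in zip(weights, pixels))

def classify(weights, pixels):
    return "cat" if score(weights, pixels) > 0 else "not cat"

def sign(value):
    return (value > 0) - (value < 0)

def perturb(weights, pixels, epsilon):
    """Nudge every pixel by at most epsilon in the direction that lowers the score."""
    return [p - epsilon * sign(w) for w, p in zip(weights, pixels)]

# Invented weights and a 100-pixel image with values on a 0-255 scale.
weights = [1, -1] * 50
original = [121, 120] * 50

adversarial = perturb(weights, original, epsilon=1)
largest_change = max(abs(a - b) for a, b in zip(adversarial, original))

print(classify(weights, original), score(weights, original))
print(classify(weights, adversarial), score(weights, adversarial))
print("largest per-pixel change:", largest_change)
```

No pixel moves by more than 1 on a 0-255 scale, a change a person would not notice, yet the label flips because a hundred small nudges all push the score the same way. Images have far more than a hundred pixels, which gives an attacker even more room.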

Edge AI

Edge AI means running AI on devices rather than in the cloud. Instead of sending data to a server, the device handles processing locally. Getting AI onto devices efficiently requires understanding agent architectures as well as model compression.

It gets less attention than NLP or computer vision but it's increasingly relevant for real applications.

Cloud processing has real costs and risks. You have to transmit data somewhere. You're dependent on connectivity. You pay for bandwidth. If the internet goes down, the system stops. Edge AI cuts these dependencies.

The face recognition that unlocks your phone is edge AI - your face isn't sent to Apple's servers. Fitness watches that detect walking or running analyse motion data on the device itself. Industrial sensors spot equipment faults locally without uploading all sensor data to the cloud. Hearing aids filter background noise in real time using on-board processing.

The challenge is hardware constraints. You can't run a model with 100 billion parameters on a phone. Edge AI requires smaller, more specialised models, and smaller models generally perform less well. Researchers are working on efficient architectures, quantisation techniques, and dedicated hardware accelerators because the payoff - AI everywhere, independent of cloud - is substantial.
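
Some rough arithmetic, plus a sketch of one quantisation scheme, shows why size is the constraint. The helper names and the sample weights are invented for this example, and the scheme shown (one shared scale factor mapping floats to 8-bit integers) is only one simple variant; production toolchains use more elaborate methods.

```python
def model_size_gb(num_parameters, bytes_per_parameter):
    """Memory needed just to store the weights, in gigabytes (10^9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9

def quantise(weights, num_bits=8):
    """Map floats to signed integers using a single shared scale factor."""
    max_int = 2 ** (num_bits - 1) - 1  # 127 for 8 bits
    scale = (max(abs(w) for w in weights) / max_int) or 1.0  # avoid a zero scale
    return [round(w / scale) for w in weights], scale

def dequantise(q_weights, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in q_weights]

# Storage for 100 billion parameters at 32-bit floats versus 8-bit integers.
print(model_size_gb(100_000_000_000, 4))  # 400.0
print(model_size_gb(100_000_000_000, 1))  # 100.0

# Invented sample weights, quantised and then restored.
sample = [0.5, -1.27, 0.03, 1.0]
q_sample, scale = quantise(sample)
restored = dequantise(q_sample, scale)
worst_error = max(abs(a - b) for a, b in zip(sample, restored))
print(q_sample, worst_error)
```

Quantising to 8 bits cuts storage to a quarter at the cost of a small rounding error per weight, but a quarter of 400 GB is still far beyond a phone. That is why compression alone is not enough and edge AI also depends on models with far fewer parameters.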

Which Subfield Will Have the Most Impact?

NLP gets the most attention. Computer vision is already embedded in real systems at scale. But edge AI is the one worth watching. For a broader picture of what's available today, the AI tools and platforms lesson covers the major players across all subfields.

NLP's primary impact so far is cultural - writing, chatbots, search. Computer vision is industrial and deployed in healthcare, manufacturing, and retail. Edge AI, once the hardware and efficiency problems are solved, puts AI capabilities into every phone, watch, car, and industrial sensor on earth - without cloud dependency.

That's a different order of magnitude. Right now, model size is the bottleneck for edge AI. The models are improving but still too large for most devices. Once that's cracked, you get persistent, local intelligence everywhere. That matters more than whether the latest chatbot can hold a better conversation.

Check your understanding


Question 1 of 2

What is a key limitation of NLP systems like ChatGPT?

Question 2 of 2

What is the main challenge holding back wider deployment of edge AI?