Backpropagation: How Neural Networks Actually Learn

Forward propagation gets you a prediction. Backpropagation gets you from "that prediction was wrong" to "here's how to adjust every weight." It's the chain rule from calculus, applied over and over. Here's what's actually happening.

The Problem Backpropagation Is Solving

After forward propagation, you have a prediction. Usually it's wrong. You measure how wrong using a loss function - a number representing how bad the mistake was.

Now comes the hard part. You have thousands or millions of weights. Which ones caused the mistake? How much should you change each one? Moving weights in the wrong direction would make things worse.

You could try random adjustments, but that's incredibly inefficient with millions of weights. You need to know: for each weight, if I increase it slightly, does the loss go up or down? By how much?
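
To make that question concrete, here is a minimal sketch of the brute-force way to answer it: nudge one weight at a time and see how the loss moves. The two-weight linear model, the squared-error loss, and the names `loss` and `nudge_gradient` are assumptions made up for this illustration, not part of any library.

```python
def loss(weights, x, target):
    """Squared error of a toy model (assumed): prediction = w0 * x + w1."""
    prediction = weights[0] * x + weights[1]
    return (prediction - target) ** 2


def nudge_gradient(weights, x, target, eps=1e-6):
    """Estimate d(loss)/d(weight) for each weight by increasing it slightly."""
    base = loss(weights, x, target)
    grads = []
    for i in range(len(weights)):
        nudged = list(weights)
        nudged[i] += eps  # increase just this one weight
        # One extra evaluation of the loss for every single weight.
        grads.append((loss(nudged, x, target) - base) / eps)
    return grads


weights = [0.5, -1.0]
grads = nudge_gradient(weights, x=2.0, target=3.0)
print(grads)  # both estimates are negative: increasing either weight lowers the loss
```

This works, but it costs one extra loss evaluation per weight, which is the part that stops scaling once you have millions of weights.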

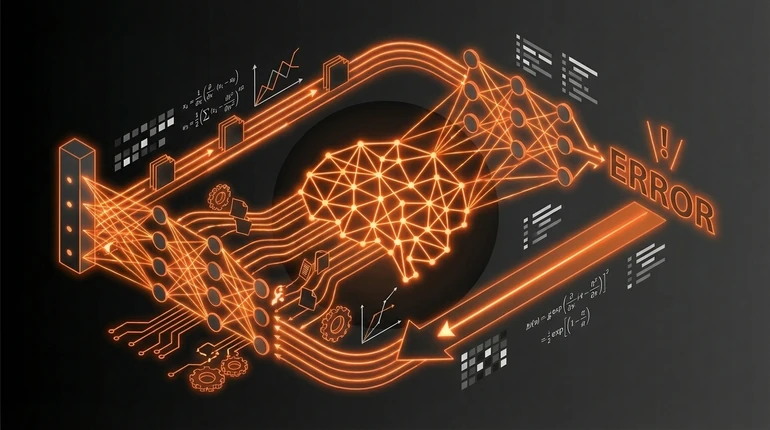

Backpropagation solves this. It calculates the gradient of the loss - how much, and in which direction, the loss changes when a weight changes - for every single weight in the network, efficiently, in one pass backward through the network.

Without backpropagation, training deep networks would be computationally impractical: estimating the effect of each weight separately means re-running the network once per weight. That's why its adoption in the 1980s was a genuine breakthrough.

The Chain Rule Without Heavy Calculus

Backpropagation is the chain rule from calculus, applied over and over.

The chain rule says: if you have a function made up of functions stacked together, the rate of change of the output depends on the rate of change at each step, multiplied together.

Simple case: the output of neuron C depends on the output of neuron B, which depends on the output of neuron A. How much does A affect C? Multiply (how much does B change when A changes) by (how much does C change when B changes). That product is your answer.
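
The A, B, C case can be checked numerically. This is a small sketch, assuming B is simply three times A and C applies `tanh` to B; both functions and the names `neuron_b` and `neuron_c` are invented for the example.

```python
import math


def neuron_b(a):
    return 3.0 * a        # assumed: B is a scaled copy of A


def neuron_c(b):
    return math.tanh(b)   # assumed: C squashes B with tanh


a = 0.2
b = neuron_b(a)

db_da = 3.0                         # how much B changes when A changes
dc_db = 1.0 - math.tanh(b) ** 2     # how much C changes when B changes
dc_da = dc_db * db_da               # chain rule: multiply the two

# Sanity check: nudge A in both directions and measure C directly.
eps = 1e-6
numeric = (neuron_c(neuron_b(a + eps)) - neuron_c(neuron_b(a - eps))) / (2 * eps)
print(dc_da, numeric)  # the two values should agree closely
```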

In a neural network, you have many of these chains: one for every path from a weight to the loss. The loss depends on the output layer, which depends on the previous layer, which depends on the one before, all the way back to the inputs. Backpropagation computes all of these products in one sweep, starting from the loss and working backward. At each step it asks: "How much should we blame this weight for the error?" That blame is the gradient.

How Errors Flow Backward Through the Network

Here's the flow:

- Make a prediction (forward pass)

- Measure the loss - how wrong you were

- Compute the gradient of the loss with respect to the output layer weights - this is straightforward calculus, because the output feeds directly into the loss

- Work backward: using the chain rule, compute how the loss changes with respect to the previous layer's weights

- Keep going backward, layer by layer

- Once you have gradients for every weight, update them: move each weight slightly in the direction opposite to its gradient (this is gradient descent)
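
The steps above can be sketched in plain Python. This is an illustration under stated assumptions, not a reference implementation: the network (one input, two sigmoid hidden units, one linear output), the squared-error loss, the learning rate, the starting weights, and the name `train_step` are all chosen just for this example.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_step(params, x, target, lr=0.1):
    """One forward pass, one backward pass, one gradient-descent update."""
    w1, b1, w2, b2 = params  # w1, b1, w2 hold one entry per hidden unit

    # Forward pass: make a prediction.
    z = [w1[j] * x + b1[j] for j in range(len(w1))]
    h = [sigmoid(zj) for zj in z]
    y = sum(w2[j] * h[j] for j in range(len(h))) + b2

    # Measure the loss.
    loss = (y - target) ** 2

    # Output layer gradients: the output feeds straight into the loss.
    dy = 2.0 * (y - target)
    grad_w2 = [dy * h[j] for j in range(len(h))]
    grad_b2 = dy

    # Work backward with the chain rule, reusing dy from the layer above.
    # The sigmoid's derivative is h * (1 - h).
    dz = [dy * w2[j] * h[j] * (1.0 - h[j]) for j in range(len(h))]
    grad_w1 = [dz[j] * x for j in range(len(h))]
    grad_b1 = dz

    # Gradient descent: move each weight opposite to its gradient.
    new_params = (
        [w - lr * g for w, g in zip(w1, grad_w1)],
        [b - lr * g for b, g in zip(b1, grad_b1)],
        [w - lr * g for w, g in zip(w2, grad_w2)],
        b2 - lr * grad_b2,
    )
    return new_params, loss


params = ([0.5, -0.3], [0.0, 0.1], [0.8, -0.6], 0.0)
losses = []
for _ in range(50):
    params, loss = train_step(params, x=1.5, target=1.0)
    losses.append(loss)
print(losses[0], losses[-1])  # the loss should shrink over the 50 steps
```

Note that `dy` is computed once and then reused for every hidden-layer gradient, which is the same trick a real network relies on at a much larger scale.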

The beautiful part is that you can do this for the entire network in a single backward pass. The chain rule lets you reuse computations from each layer as you move backward, rather than computing each weight's gradient independently. This efficiency is why backpropagation works for networks with millions of weights.
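
For readers who want to see the reuse written down, here is one common way to express it. The notation is an assumption introduced for this sketch: layer $l$ has weights $W^{(l)}$ and biases $b^{(l)}$, pre-activations $z^{(l)} = W^{(l)} a^{(l-1)} + b^{(l)}$, activations $a^{(l)} = \sigma(z^{(l)})$, and $\delta^{(l)}$ stands for the gradient of the loss $\mathcal{L}$ with respect to $z^{(l)}$.

```latex
% Error signal for layer l, built from the one already computed for layer l+1
\delta^{(l)} = \left( \left(W^{(l+1)}\right)^{\top} \delta^{(l+1)} \right) \odot \sigma'\!\left(z^{(l)}\right)

% Gradient for every weight in layer l, obtained from that single error signal
\frac{\partial \mathcal{L}}{\partial W^{(l)}} = \delta^{(l)} \left(a^{(l-1)}\right)^{\top}
```

Each $\delta^{(l)}$ is computed once and then serves both the weight gradients of its own layer and the error signal of the layer before it, so nothing upstream has to be recomputed.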

Why This Was a Breakthrough

Backpropagation was discovered before the 1980s, but only became widely used then, notably after Rumelhart, Hinton and Williams's 1986 paper. That's when people realised: with this, we can train networks with multiple hidden layers using activation functions like sigmoid, and they can outperform shallow ones.

Before it became standard, there was no practical way to work out how the weights in hidden layers should change, so training deep networks was largely guesswork. Results were poor. Backpropagation gave a systematic, efficient method to improve every weight in the right direction. Deep networks became trainable.

In the 1980s and 1990s, this drove a period of excitement about neural networks. But computers weren't fast enough yet, and data was scarce. Deep learning went quiet again until GPUs made the computation feasible around 2012. Backpropagation plus stochastic gradient descent plus large datasets: that combination is what made modern deep learning possible.

Conceptually, here's what you need to understand: start from the loss. Move backward through the network. At each step, use the chain rule to figure out how much each weight is responsible for the error. Update weights to reduce that error. That's most of what you need to know to work with neural networks. The calculus gets more involved when you implement it, but the concept is about blame and feedback.

Check your understanding

Question 1 of 2

What does backpropagation calculate?

Question 2 of 2

What mathematical principle does backpropagation apply?