Linear Regression and Gradient Descent: The Foundation of Machine Learning Explained

Linear regression is the simplest machine learning model. But the ideas behind it - loss functions, gradient descent, convergence - are the same ideas behind every other model. Getting this right makes everything else easier.


What Linear Regression Is and What It's Trying to Do

At its core, linear regression draws a line through data.

You have data points. Each has an input (x) and an output (y): house size and price, years of experience and salary, temperature and ice cream sales. The model's job is to find the line that best predicts y from x. Once you have it, give it any x and it predicts y.
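As a rough sketch of what "give it any x and it predicts y" means, the snippet below hard-codes a line for the house-size example. The slope and intercept are made-up numbers rather than values fitted to real data, and the function name `predict` is just an illustrative choice.

```python
def predict(x, m, b):
    """Return the y value the line y = m*x + b predicts for input x."""
    return m * x + b

# Hypothetical line: each square metre adds 1,500 to the price,
# on top of a base price of 20,000 (made-up numbers).
slope = 1500.0
intercept = 20000.0

price = predict(80, slope, intercept)
print(price)  # 140000.0
```

Training is the process of finding good values for `slope` and `intercept` instead of guessing them.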

Why start here? Because it's interpretable - you can understand why it made a prediction. It's fast. It works well when the relationship is actually linear. And the concepts transfer to every other model: random forests, neural networks and deep learning models all try to find a function that maps inputs to outputs. Linear regression uses the simplest such function. Everything else is more complicated.

The Line of Best Fit Without Heavy Maths

To pick the "best" line, you define its distance from the data mathematically. If the line predicts y_predicted and the actual value is y_actual, the error is the difference. You can't just add errors up - positives and negatives would cancel. So you square them (a squared error is never negative) and take the average. This is mean squared error (MSE).
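A minimal sketch of that calculation in plain Python follows. The numbers are made up so that the raw errors cancel exactly, which shows why squaring is needed; the function name is an assumption for illustration.

```python
def mean_squared_error(y_actual, y_predicted):
    """Average of the squared differences between predictions and actual values."""
    squared_errors = [(p - a) ** 2 for a, p in zip(y_actual, y_predicted)]
    return sum(squared_errors) / len(squared_errors)

# Made-up values for illustration.
y_actual = [10, 20, 30, 30]
y_predicted = [12, 18, 31, 29]

raw_errors = [p - a for a, p in zip(y_actual, y_predicted)]
print(sum(raw_errors))                            # 0: the raw errors cancel out
print(mean_squared_error(y_actual, y_predicted))  # 2.5
```

Every prediction here is wrong, yet the plain sum of errors is zero. The squared version reports a non-zero error, as it should.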

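The computer's job: find the line that minimises MSE. A line is defined by slope (m) and intercept (b): y = mx + b. The search is for values of m and b that make MSE as small as possible. One general way to run that search is gradient descent. Scikit-learn's linear model docs show how fitting looks in practice; note that its LinearRegression solves the least-squares problem directly, while SGDRegressor uses a gradient descent variant.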

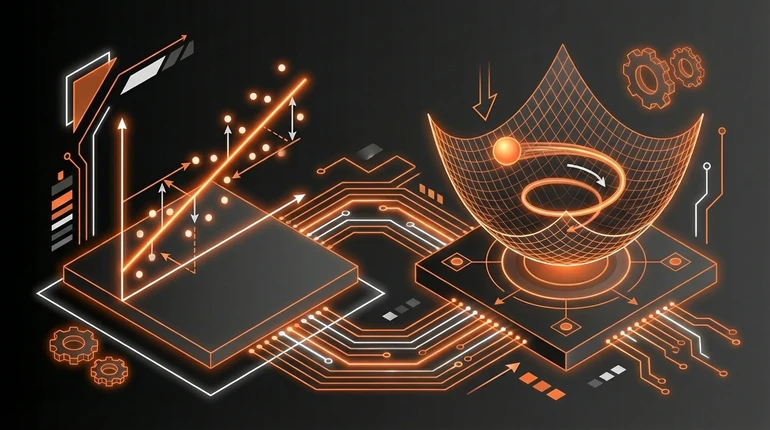

What Gradient Descent Is: The Hill-Walking Analogy

Imagine you're lost in fog on a hill. You can't see the bottom, but you can feel the slope under your feet. If you always walk downhill, you'll eventually reach the bottom. Gradient descent is that algorithm.

Start with random values for m and b. Compute the error. Ask: if I change m slightly, does the error get smaller or bigger? If smaller, move that way. Adjust m and b a little. Ask again. Keep doing this - always moving in the direction of smaller error - until moving in any direction makes things worse. That's the bottom. That's your best fit.

The gradient is the slope of the error surface. The direction the gradient points is where error increases fastest. Moving opposite to the gradient means moving towards lower error.
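For MSE with a line y = mx + b, the gradient can be written down directly: one partial derivative for m and one for b. The sketch below is one way to express it in plain Python. The function names and the toy data (points lying exactly on y = 2x + 1) are assumptions for illustration.

```python
def mse(xs, ys, m, b):
    """Mean squared error of the line y = m*x + b on the data."""
    return sum((m * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def gradient(xs, ys, m, b):
    """Partial derivatives of MSE with respect to m and b."""
    n = len(xs)
    residuals = [m * x + b - y for x, y in zip(xs, ys)]
    grad_m = (2 / n) * sum(r * x for r, x in zip(residuals, xs))
    grad_b = (2 / n) * sum(residuals)
    return grad_m, grad_b

# Toy data lying exactly on y = 2x + 1 (made up for illustration).
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

grad_m, grad_b = gradient(xs, ys, m=0.0, b=0.0)
print(grad_m, grad_b)  # -35.0 -12.0

# Both values are negative, so error rises fastest when m and b decrease.
# Stepping the opposite way (increasing m and b) lowers the error.
step = 0.01
before = mse(xs, ys, 0.0, 0.0)
after = mse(xs, ys, 0.0 - step * grad_m, 0.0 - step * grad_b)
print(after < before)  # True
```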

The learning rate controls step size. Big steps are fast but risky - you might overshoot. Small steps are slow but safe. You pick a learning rate and tune it experimentally.
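To get a feel for the trade-off, here is a small experiment, assuming toy data on the line y = 2x + 1 and three hand-picked learning rates. The specific rates and the step count are illustrative choices; suitable values depend on the data.

```python
def final_mse(xs, ys, learning_rate, steps):
    """Run gradient descent from m = 0, b = 0 and return the MSE afterwards."""
    m, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        residuals = [m * x + b - y for x, y in zip(xs, ys)]
        grad_m = (2 / n) * sum(r * x for r, x in zip(residuals, xs))
        grad_b = (2 / n) * sum(residuals)
        m -= learning_rate * grad_m
        b -= learning_rate * grad_b
    return sum((m * x + b - y) ** 2 for x, y in zip(xs, ys)) / n

# Toy data lying exactly on y = 2x + 1 (made up for illustration).
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

start = final_mse(xs, ys, learning_rate=0.0, steps=0)      # MSE before any step
tiny = final_mse(xs, ys, learning_rate=0.001, steps=50)    # safe but slow
moderate = final_mse(xs, ys, learning_rate=0.05, steps=50)
too_big = final_mse(xs, ys, learning_rate=0.2, steps=50)   # overshoots

print(start, tiny, moderate, too_big)
```

On this data the tiny rate should still be some way from the minimum after 50 steps, the moderate rate much closer, and the large rate should finish with a higher error than it started with because each step overshoots further than the last.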

How Gradient Descent Finds the Best Model

In practice:

- Start with random m and b

- Compute the error (MSE)

- Compute the gradient - it points in the direction where error increases fastest as m and b change

- Take a small step in the opposite direction

- Repeat hundreds or thousands of times

After many iterations, m and b stop changing meaningfully. You've converged. That's your trained model.
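The steps above can be sketched as a short training loop. This is a minimal version under stated assumptions: plain Python, toy data on the line y = 2x + 1, and a learning rate, tolerance and step limit picked by hand. The name `train` and its parameters are hypothetical, not taken from any library.

```python
import random

def train(xs, ys, learning_rate=0.05, max_steps=10_000, tolerance=1e-9):
    """Fit y = m*x + b by gradient descent on MSE; return (m, b, steps_taken)."""
    rng = random.Random(0)          # fixed seed so the run is repeatable
    m = rng.uniform(-1.0, 1.0)      # start with random m and b
    b = rng.uniform(-1.0, 1.0)
    n = len(xs)
    for step in range(1, max_steps + 1):
        # Compute the error terms and the gradient of MSE.
        residuals = [m * x + b - y for x, y in zip(xs, ys)]
        grad_m = (2 / n) * sum(r * x for r, x in zip(residuals, xs))
        grad_b = (2 / n) * sum(residuals)
        delta_m = learning_rate * grad_m
        delta_b = learning_rate * grad_b
        m -= delta_m                # step opposite to the gradient
        b -= delta_b
        # Converged: the parameters have stopped changing meaningfully.
        if abs(delta_m) < tolerance and abs(delta_b) < tolerance:
            return m, b, step
    return m, b, max_steps

# Toy data lying exactly on y = 2x + 1 (made up for illustration).
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

m, b, steps = train(xs, ys)
print(round(m, 3), round(b, 3), steps)
```

The result should land close to m = 2 and b = 1. Stopping when the updates fall below a tolerance is one simple way to detect convergence; watching the change in MSE is another.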

This is the basic training loop for almost every machine learning model. Neural networks have way more parameters to adjust, but the idea is identical: define what "good" means (loss function), compute the gradient, take steps to reduce loss. The backpropagation lesson extends this to deep networks.

For linear regression, the mathematics guarantees the error surface is bowl-shaped - convex, with one minimum. Gradient descent finds it reliably. For more complex models the surface can be bumpier, which is why training neural networks is more involved.
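One way to see the single-minimum property is to start gradient descent from several different points and check that they all end up in the same place. The sketch below does that on made-up noisy data and compares the results with the standard closed-form least-squares formulas for slope and intercept, used here only as a reference. The function names, starting points and hyperparameters are illustrative assumptions.

```python
def fit_by_gradient_descent(xs, ys, m, b, learning_rate=0.05, steps=5000):
    """Run a fixed number of gradient descent steps on MSE from a given start."""
    n = len(xs)
    for _ in range(steps):
        residuals = [m * x + b - y for x, y in zip(xs, ys)]
        m -= learning_rate * (2 / n) * sum(r * x for r, x in zip(residuals, xs))
        b -= learning_rate * (2 / n) * sum(residuals)
    return m, b

def fit_closed_form(xs, ys):
    """Least-squares slope and intercept from the standard closed-form formulas."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    m = (sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
         / sum((x - x_mean) ** 2 for x in xs))
    return m, y_mean - m * x_mean

# Made-up noisy data, roughly following y = 2x.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]

starts = [(0.0, 0.0), (10.0, -10.0), (-5.0, 8.0)]
results = [fit_by_gradient_descent(xs, ys, m0, b0) for m0, b0 in starts]
reference = fit_closed_form(xs, ys)

for m, b in results:
    print(round(m, 4), round(b, 4))
print(round(reference[0], 4), round(reference[1], 4))
```

All the printed rows should agree to the displayed precision, whichever starting point was used. With a non-convex loss, such as a neural network's, different starts would not be expected to agree like this.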

Why This Matters as a Foundation for Everything Else

Every model you build later has the same structure: a loss function (how to measure wrong), parameters to adjust, an optimisation algorithm (usually gradient descent or a variant), and validation to check whether the model generalises. See model evaluation for how to do that validation properly.

In logistic regression, you're still minimising a loss function with gradient descent. In neural networks, same thing - just with way more parameters. In random forests, you're still measuring how well predictions match reality.

Understanding linear regression gives you intuition for why those models work. And it's often the right tool. When you have a big complicated dataset with fancy models available, sometimes the simplest thing that works is a linear regression. Compare it to the full range of ML algorithms to see where it fits. It's interpretable, fast, and deployable. If a deep learning model gets 2% higher accuracy but you can't explain its predictions, the linear regression might be the better choice.

You don't need to derive gradient formulas by hand. But you should understand what a loss function is, why you minimise it, how gradient descent navigates the error surface, and what convergence means. That understanding is the difference between treating fit() as magic and actually being able to debug when training goes wrong.

Check your understanding

- What does gradient descent do during model training?

- Why is the learning rate important in gradient descent?