Logistic Regression and Classification: How Machines Learn to Make Decisions

Logistic regression isn't regression - it's classification. The name is a historical accident. It predicts the probability that an input belongs to a class rather than an unbounded number, and it's fast to train and easy to interpret compared with most alternatives. It's also chronically underused.


Regression Predicts Numbers, Classification Predicts Categories

Regression predicts a continuous number. Given a house's size, predict its price. Given an animal's age, predict its weight. The output is a real number on a number line.

Classification predicts a category. Given an email, is it spam or not? Given an image, is it a dog or a cat? The output is one of a discrete set of possibilities.

Linear regression predicts numbers. Logistic regression predicts categories. The names are similar - confusingly so. "Logistic regression" isn't regression; it's classification. Someone named it regression because it borrows the regression framework. It stuck.

You can't just use linear regression for classification. If you train it to predict whether an email is spam (0 for not spam, 1 for spam), it'll happily predict 2.5 for some emails. That doesn't make sense. An email is either spam or it isn't. Logistic regression fixes that.
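To make the problem concrete, here is a minimal sketch that fits an ordinary least-squares line to 0/1 labels. The data is made up for illustration (one feature per email, imagined as a count of suspicious words), and `fit_line` and `linear_predict` are hypothetical helper names, not part of any library.

```python
# Toy data (made up): one feature per email, label 1 = spam, 0 = not spam.
xs = [0, 1, 2, 3, 4, 5]
ys = [0, 0, 0, 1, 1, 1]

def fit_line(xs, ys):
    """Ordinary least squares for one feature: y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept

slope, intercept = fit_line(xs, ys)

def linear_predict(x):
    """Raw linear output: nothing keeps it between 0 and 1."""
    return slope * x + intercept

print(linear_predict(10))  # greater than 1
print(linear_predict(-3))  # less than 0
```

Inputs outside the range seen in training push the straight line past 1 or below 0, so the output can't be read as a probability.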

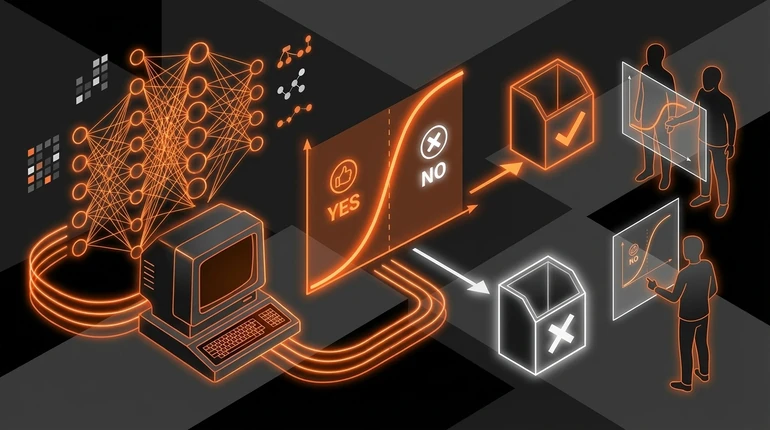

What Logistic Regression Does

Logistic regression outputs a probability. Instead of predicting "spam" or "not spam," it predicts "99% chance this is spam" or "5% chance this is spam."

The maths takes the output of a linear model (a weighted sum of the input features, which can be any number) and transforms it into a probability between 0 and 1. Then you set a threshold, 0.5 by default. Probabilities above the threshold? Predict the positive class. Below it? Predict the negative class.

Probabilities are more informative than hard decisions. If the model says "87% chance spam," you're confident. If it says "51% chance spam," you're less sure. You can adjust your threshold based on the cost of being wrong. If false positives are expensive, only mark emails as spam at 95% or higher. If missing spam is more costly, lower the threshold to 30%.
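One way to turn "the cost of being wrong" into a number is sketched below. It assumes the model's probabilities are well calibrated and that you can put a relative cost on each kind of mistake. The function names and the cost values are hypothetical, chosen so the results match the 95% and 30% thresholds mentioned above.

```python
def cost_based_threshold(cost_false_positive, cost_false_negative):
    """Threshold that minimises expected cost, assuming calibrated probabilities.

    Predicting positive costs (1 - p) * cost_false_positive on average;
    predicting negative costs p * cost_false_negative. Positive is the
    cheaper choice when p > cost_fp / (cost_fp + cost_fn).
    """
    return cost_false_positive / (cost_false_positive + cost_false_negative)

def classify(probability, threshold=0.5):
    """True means 'predict the positive class' (for example, spam)."""
    return probability > threshold

# False positives 19x as costly as false negatives: be strict.
strict = cost_based_threshold(19, 1)
# Missing spam is the more costly error: be lenient.
lenient = cost_based_threshold(3, 7)

print(strict, classify(0.87, strict))    # 0.87 is not enough at the strict threshold
print(lenient, classify(0.40, lenient))  # 0.40 is enough at the lenient threshold
```

The model itself is unchanged in both cases; only the decision rule applied to its output moves.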

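Training follows the same pattern as gradient-based training of linear regression: gradient descent minimising a loss function. The loss function is different - cross-entropy loss rather than mean squared error - but the training loop has the same shape: predict, measure the error, nudge the weights, repeat. One difference is that linear regression can also be solved directly with a closed-form formula, whereas logistic regression has to be fitted iteratively.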
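The loop can be sketched in plain Python for a single feature. This is an illustration under stated assumptions, not a production implementation: the data, learning rate, and step count are made up, and real libraries use faster and more robust optimisers.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

# Toy data (made up). The classes overlap slightly, so the weights stay finite.
xs = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
ys = [0, 0, 1, 0, 1, 1]

def cross_entropy(w, b):
    """Mean cross-entropy loss of the model p = sigmoid(w * x + b)."""
    total = 0.0
    for x, y in zip(xs, ys):
        p = sigmoid(w * x + b)
        total -= y * math.log(p) + (1 - y) * math.log(1 - p)
    return total / len(xs)

w, b = 0.0, 0.0
learning_rate = 0.1  # assumed value, small enough to be stable on this data
initial_loss = cross_entropy(w, b)

for step in range(2000):
    grad_w = 0.0
    grad_b = 0.0
    for x, y in zip(xs, ys):
        error = sigmoid(w * x + b) - y  # gradient of the loss w.r.t. the linear output
        grad_w += error * x
        grad_b += error
    w -= learning_rate * grad_w / len(xs)
    b -= learning_rate * grad_b / len(xs)

final_loss = cross_entropy(w, b)
print(initial_loss, final_loss, w, b)
```

The gradient has the same form as in linear regression with squared error, prediction minus label times the input; the only change is that the prediction passes through the sigmoid first.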

The Sigmoid Function

The sigmoid function is the mathematical transformation that turns any number into a probability between 0 and 1. Very negative inputs map close to 0. Very positive inputs map close to 1. Zero maps to 0.5. It produces an S-shaped curve.

You don't need to memorise the formula. Intuitively: data points from two classes are scattered on a 2D plot. Logistic regression still draws a straight line between them - that line is the decision boundary, where the probability is exactly 0.5. What the sigmoid adds is a smooth transition on either side: the further a point sits from the line, the closer its probability gets to "almost certainly class A" or "almost certainly class B."

The sigmoid constrains outputs to the valid range for probabilities. That's the whole job.
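For reference, the formula is sigmoid(z) = 1 / (1 + e^(-z)). A minimal sketch in Python, using only the standard library:

```python
import math

def sigmoid(z):
    """Map any real number to a value strictly between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))

for z in (-10, -2, 0, 2, 10):
    print(z, sigmoid(z))

print(sigmoid(0))  # 0.5
```

This simple form is fine for moderate inputs. For very large negative `z`, `math.exp(-z)` can overflow, so numerical libraries typically use a more careful formulation.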

Real Examples of Classification Problems

Email filtering: spam or not spam. Binary classification (two classes). This is the classic classification problem, covered in the context of how AI learns.

Medical diagnosis: does the patient have disease X? Doctors sometimes want probabilities rather than hard decisions: "85% confident this is pneumonia." That maps directly to logistic regression output.

Credit approval: approve or deny. Companies often output a score or probability rather than a hard yes/no, so humans can review borderline cases. Scikit-learn's logistic regression docs show how this is implemented in practice.

Image recognition: is this a cat, dog, bird, or fish? Multi-class classification. Logistic regression extends to this with softmax - a generalisation of sigmoid that produces probabilities across multiple classes simultaneously.
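As a rough sketch of what softmax does, the snippet below turns one score per class into probabilities that sum to 1. The scores are made up for illustration; in a real model they would come from one weighted sum of the features per class.

```python
import math

def softmax(scores):
    """Convert a list of real-valued scores into probabilities that sum to 1."""
    max_score = max(scores)  # subtracting the max avoids overflow in exp
    exps = [math.exp(s - max_score) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

labels = ["cat", "dog", "bird", "fish"]
scores = [2.0, 1.0, 0.1, -1.0]  # made-up scores, one per class
probs = softmax(scores)

for label, p in zip(labels, probs):
    print(label, round(p, 3))

# With two classes and the second score fixed at 0, softmax reduces to sigmoid.
two_class = softmax([1.5, 0.0])[0]
print(two_class, 1.0 / (1.0 + math.exp(-1.5)))
```

The last two printed values agree, which is the sense in which softmax generalises the sigmoid.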

Fraud detection: is this transaction fraudulent? Binary classification where both false positives (blocking legitimate transactions) and false negatives (missing fraud) are costly. Adjusting the decision threshold lets you control the balance between them.
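The trade-off can be seen by counting both kinds of error at a few thresholds. The scored transactions below are made up for illustration, and `count_errors` is a hypothetical helper.

```python
# Made-up (predicted fraud probability, actually fraud?) pairs.
scored = [
    (0.05, False), (0.20, False), (0.35, True), (0.40, False),
    (0.60, False), (0.70, True), (0.90, True), (0.97, True),
]

def count_errors(scored, threshold):
    """Return (false_positives, false_negatives) when flagging p >= threshold."""
    false_positives = sum(1 for p, actual in scored if p >= threshold and not actual)
    false_negatives = sum(1 for p, actual in scored if p < threshold and actual)
    return false_positives, false_negatives

for threshold in (0.3, 0.5, 0.95):
    fp, fn = count_errors(scored, threshold)
    print(f"threshold={threshold}: blocked legitimate={fp}, missed fraud={fn}")
```

On this toy data, raising the threshold from 0.3 to 0.95 takes the count of blocked legitimate transactions from 2 to 0 and the count of missed fraud from 0 to 3.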

All of these share the same structure: input data, model predicts probability, apply a threshold, make a decision.

When Logistic Regression Beats Fancier Models

Logistic regression is underrated. People jump to neural networks without trying it first. That's a mistake.

It wins when the problem is approximately linearly separable - when the two classes naturally separate with a linear boundary. In that case, adding complexity doesn't help. It wins with limited data: simpler models generalise better on small datasets, where a fancy model would overfit - as the model evaluation lesson explains. It wins when you need explainability: you can look at the trained weights and see which features push predictions in which direction, and by how much. Try explaining that with a deep neural network. And it wins on speed: a prediction is a single weighted sum followed by a sigmoid, so inference is about as cheap as a model can get.
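Reading the weights can look like the sketch below. The feature names and weight values are hypothetical, standing in for a trained spam model. The one fact it relies on is that increasing a feature by one unit multiplies the odds of the positive class by e raised to that feature's weight.

```python
import math

# Hypothetical trained weights for a spam model (made up for illustration).
weights = {"num_links": 0.8, "contains_free": 1.5, "known_sender": -2.0}

# Rank features by the size of their effect, largest first.
ranked = sorted(weights.items(), key=lambda item: -abs(item[1]))

for feature, w in ranked:
    direction = "towards spam" if w > 0 else "away from spam"
    odds_multiplier = math.exp(w)
    print(f"{feature}: weight {w:+.1f}, odds x{odds_multiplier:.2f} per unit ({direction})")
```

Comparing weight sizes across features is only meaningful when the features are on comparable scales, so standardising inputs before training is a common assumption behind this kind of reading.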

When does it lose? When the decision boundary is complicated - when it curves in ways a linear boundary can't capture. Random forests and neural networks can model those curves. Logistic regression on the raw features can't.

My advice: always try logistic regression first. It's your baseline. If it solves the problem, use it. If not, understand why - is it accuracy, speed, or something else? Then choose the next model based on that gap, not based on what sounds impressive. The key ML algorithms lesson covers what to try next.

Check your understanding


Question 1 of 2

What does logistic regression output, and why is that useful?

Question 2 of 2

In which situations does logistic regression typically beat more complex models like neural networks?