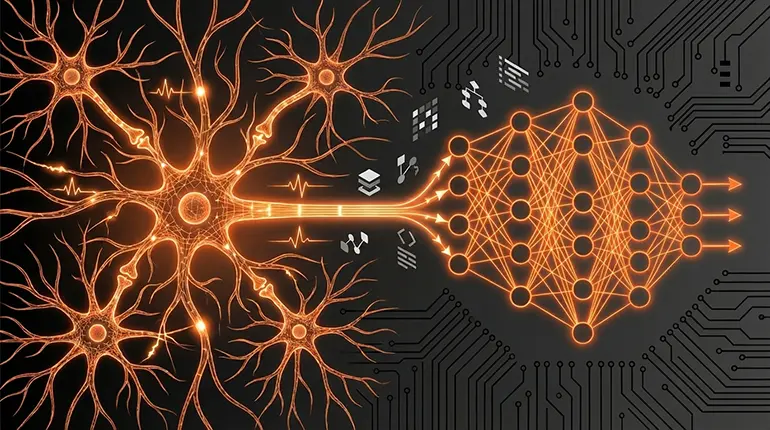

Biological vs Artificial Neurons: How the Brain Inspired Deep Learning

The brain is a terrible metaphor for what deep learning actually does - and that's exactly why it works so well as a starting point. Here's what biological neurons actually do, what artificial ones borrowed, and when to drop the analogy entirely.


The brain is a terrible metaphor for what deep learning actually does. That's not a reason to avoid it - it's a reason to use it carefully and know exactly when to drop it.

How Biological Neurons Actually Work

A neuron in your brain is a cell that receives signals from other neurons through connections called synapses. When enough excitatory input arrives within a short window, the neuron fires: it sends an electrical pulse (an action potential) down its axon to connected neurons. The strength of each connection depends on neurotransmitter signalling and on processes such as long-term potentiation and long-term depression, which strengthen or weaken connections based on repeated activity.

Here's what actually matters: a neuron doesn't run arithmetic the way a program does. To a first approximation, it fires or doesn't fire. The "learning" happens when connections between neurons strengthen or weaken based on repeated patterns. This is what neuroscientists call synaptic plasticity.

That's the biological side. It's not really about maths. It's about chemical and electrical signals changing over time. There's a time dimension - neurons fire in sequences, patterns emerge over milliseconds - that has no direct equivalent in most artificial systems.

What Artificial Neurons Borrowed

Artificial neurons take one key idea from biology: a unit receives multiple inputs, processes them, and produces an output. That part is accurate. Where it diverges immediately is in how it processes.

An artificial neuron multiplies each input by a number called a weight, adds all those products together, adds another number called a bias, and then applies a function to that sum. That's it. Multiplication and addition. No chemistry, no timing, no physical firing.
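The arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not a production implementation: the function names `neuron` and `sigmoid`, the choice of sigmoid as the activation, and the input, weight, and bias values are all assumptions picked for the example rather than anything the lesson prescribes.

```python
import math


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs, weights, bias, activation=sigmoid):
    """Weighted sum of the inputs, plus a bias, passed through an activation."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    # Multiply each input by its weight, add the products, then add the bias.
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    # Apply the function to that sum.
    return activation(z)


# Hypothetical values chosen only for illustration.
inputs = [1.0, 2.0, 3.0]
weights = [0.5, -1.0, 0.25]
bias = 0.75

output = neuron(inputs, weights, bias)
print(output)  # weighted sum is 0.0, and sigmoid(0.0) is 0.5
```

Swapping `activation` for a different function changes the output but not the structure: the multiply, add, and apply steps stay the same.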

The weights in an artificial network play a similar role to synaptic strength - they control how much each input matters to the output. But artificial neurons don't have the time dimension that real neurons do. They don't fire over time. They take inputs in and output a number. That's the entire operation.

See Lesson 19 for exactly how this arithmetic chains together across layers to produce a full neural network prediction.

Why the Analogy Is Useful but Misleading

The analogy helps because it gives you permission to think of networks as learning systems that improve without explicit programming. That's true for both brains and neural networks, even though the mechanisms are completely different. If you're new to the field, that framing is helpful - it makes the idea feel less arbitrary.

The problem is that people then think: deeper networks must be smarter, more connections must be better, and mimicking neural biology more closely must produce better results. None of that follows from the analogy, and none of it holds as a general rule in practice.

The analogy breaks down the moment you need to understand why something doesn't work. When a network trains poorly, it's not because the "neurons are firing the wrong way." It's because the maths is set up wrong - the loss function is mismatched, gradients are vanishing, the learning rate is too high. You need to think mathematically to fix it, not biologically. The brain metaphor points you at the wrong level of abstraction.
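To make one of those failure modes concrete, here is a small sketch of what "the learning rate is too high" looks like numerically. It assumes a toy one-parameter loss, `loss(w) = w**2`, which is not from the lesson; the function name `gradient_descent` and the two learning rates are likewise hypothetical choices for illustration.

```python
def gradient_descent(learning_rate, steps=20, start=1.0):
    """Minimise the toy loss w**2 by gradient descent and return the final w."""
    w = start
    for _ in range(steps):
        grad = 2.0 * w                 # derivative of w**2 with respect to w
        w = w - learning_rate * grad   # standard gradient descent update
    return w


# Assumed learning rates: one small enough to converge, one too large.
small = gradient_descent(learning_rate=0.1)
large = gradient_descent(learning_rate=1.1)

print(abs(small))  # shrinks towards 0, the minimum of the loss
print(abs(large))  # grows with every step: the updates overshoot and diverge
```

Each update multiplies `w` by `1 - 2 * learning_rate`. At 0.1 that factor is 0.8, so `w` shrinks; at 1.1 it is -1.2, so `w` flips sign and grows. Nothing about "neurons firing" explains this behaviour, but the arithmetic does.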

Does the Brain Analogy Help or Hurt Beginners?

It helps initially - it makes the idea seem less arbitrary and gives you a way to talk about "learning" that feels intuitive. Then it actively hurts.

Once you start building networks, you need to let go of the brain metaphor and think about gradients, backpropagation, loss functions, and matrix operations. Holding on to "this is basically a brain" will make you miss the actual principles that make deep learning work. You'll ask the wrong questions and reach for the wrong explanations.

The best approach: use the brain analogy to motivate why we might want systems that improve from examples without explicit rules. Then immediately drop it and learn the actual maths. The sooner you stop thinking of artificial neurons as biological neurons, the faster you'll understand what's actually happening when a network trains.

Check your understanding


Question 1 of 2

What operation does an artificial neuron perform on its inputs?

Question 2 of 2

According to the lesson, when does the brain analogy start actively hurting your understanding of deep learning?