Activation Functions and the Vanishing Gradient Problem Explained for Beginners

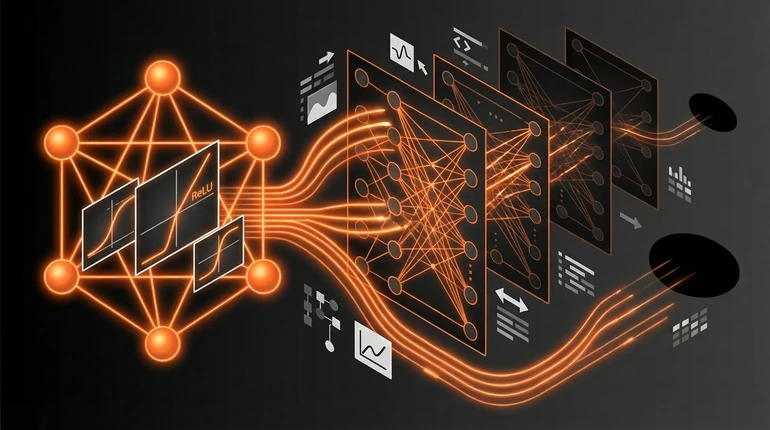

A neuron that only multiplies and adds can't do much: stack as many as you like and the result is still a straight-line model. Activation functions are what make neural networks actually work. And the choice of activation function once made training deep networks practically impossible - until ReLU changed that.

What Activation Functions Do and Why They're Needed

Without an activation function, a neural network is just a very complicated way to do linear maths. Layer one does linear operations. Layer two combines those with more linear operations. Layer three combines those. But linear combined with linear is still linear - regardless of how many layers you stack.
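To see why stacking linear layers gains nothing, here is a minimal sketch in plain Python. The weights, biases and helper names (`matvec`, `matmul`, `add`) are made up for illustration. It shows that two layers without an activation give exactly the same output as one merged layer.

```python
# Two layers with no activation: y = W2 * (W1 * x + b1) + b2
# All numbers below are arbitrary values chosen for illustration.

def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def matmul(A, B):
    """Multiply matrix A by matrix B."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))]
            for i in range(len(A))]

def add(u, v):
    """Add two vectors element by element."""
    return [a + b for a, b in zip(u, v)]

W1, b1 = [[1.0, 2.0], [0.5, -1.0]], [0.5, -0.5]
W2, b2 = [[2.0, 0.0], [1.0, 3.0]], [1.0, 0.0]

def two_layers(x):
    return add(matvec(W2, add(matvec(W1, x), b1)), b2)

# Collapse both layers into a single linear layer:
# W = W2 * W1 and b = W2 * b1 + b2
W = matmul(W2, W1)
b = add(matvec(W2, b1), b2)

def one_layer(x):
    return add(matvec(W, x), b)

x = [3.0, -2.0]
print(two_layers(x))  # [0.0, 8.5]
print(one_layer(x))   # [0.0, 8.5] - identical, so the extra layer added nothing
```

Putting a non-linear function between the two layers is what stops them collapsing into one.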

Linear operations can't learn interesting patterns. They can't recognise faces in photos, understand language, or find non-obvious relationships in data. You need non-linearity.

An activation function adds non-linearity. It's a simple function applied after each neuron's multiply-and-add step. It squashes, transforms, or filters the output.

So the neuron operation becomes: apply the activation function to (weights times inputs plus bias). This non-linearity lets the network learn complex, curved relationships between inputs and outputs instead of just straight lines.
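As a rough sketch, the neuron operation looks like this in Python. The function name `neuron` and the example numbers are assumptions chosen for illustration, not part of any library.

```python
import math

def relu(z):
    return max(0.0, z)

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias, activation):
    """One neuron: multiply-and-add, then apply the activation function."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)

inputs = [1.0, 2.0]
weights = [0.5, -1.0]
bias = 0.5
# Multiply-and-add step: 0.5*1.0 + (-1.0)*2.0 + 0.5 = -1.0

relu_out = neuron(inputs, weights, bias, relu)
sigmoid_out = neuron(inputs, weights, bias, sigmoid)
print(relu_out)     # 0.0, because ReLU turns the negative value into zero
print(sigmoid_out)  # roughly 0.27, because sigmoid squashes -1.0 into the 0-1 range
```

The same multiply-and-add result gives different outputs depending on the activation function you choose.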

The Main Activation Functions

Sigmoid was the classic. It takes any number and squashes it to a value between 0 and 1. Smooth, with useful mathematical properties. The problem: it's almost flat at the extremes, so very high or very low inputs produce near-zero gradients. Even at its steepest point, in the middle, its slope is only 0.25. These small slopes are what cause the vanishing gradient problem.

Tanh is similar but squashes to between -1 and 1. It's centred at zero, which helps training slightly compared to sigmoid. But it has the same vanishing gradient problem at the extremes.

ReLU (rectified linear unit) is simple: if the input is positive, output it unchanged. If it's negative, output zero. Not smooth, but extremely effective. It doesn't flatten at the high end - for any positive input the slope is exactly 1, so the gradient doesn't shrink as it passes through. For negative inputs the slope is 0 and the gradient is blocked.

ReLU became the standard for hidden layers because it's computationally cheap and it works. Sigmoid is still used for output layers in binary classification because its 0-1 range gives a probability. There are ReLU variants - Leaky ReLU, ELU, GELU - but they're refinements. ReLU was the breakthrough that mattered.
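The three main functions described above, along with their slopes (gradients), can be sketched in a few lines of Python. The helper names are chosen for illustration. The slope formulas are the standard derivatives of each function, and the slope of ReLU at exactly zero is treated as 0 here by convention.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sigmoid_slope(z):
    s = sigmoid(z)
    return s * (1.0 - s)               # never larger than 0.25

def tanh_slope(z):
    return 1.0 - math.tanh(z) ** 2     # never larger than 1.0

def relu(z):
    return max(0.0, z)

def relu_slope(z):
    return 1.0 if z > 0 else 0.0       # exactly 1 or exactly 0

# Compare the slopes at a very negative, a middle and a very positive input.
for z in (-10.0, 0.0, 10.0):
    print(z, sigmoid_slope(z), tanh_slope(z), relu_slope(z))
```

At an input of 10, the sigmoid and tanh slopes are both tiny, while the ReLU slope is still 1.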

The Vanishing Gradient Problem

When you backpropagate through sigmoid or tanh, you multiply gradients at each layer. The gradient of sigmoid in its flat regions is very small - close to zero. Multiply many small numbers together and you get something extremely small.

Multiply ten factors of 0.1 together and you get 0.0000000001. That is roughly how much the gradient signal can shrink by the time it reaches the early layers of a ten-layer network. The update to each weight is proportional to its gradient, so weights in early layers barely change. Those layers essentially stop learning.
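The arithmetic is easy to check with a short sketch. It makes a simplifying assumption: every layer shrinks the gradient by the same factor and the weights themselves contribute nothing extra. The function name `gradient_scale` is made up for illustration.

```python
def gradient_scale(per_layer_slope, num_layers):
    """Multiply the same per-layer slope together once for each layer."""
    scale = 1.0
    for _ in range(num_layers):
        scale *= per_layer_slope
    return scale

# The example from the text: a slope of 0.1 at each of ten layers.
print(gradient_scale(0.1, 10))    # about 1e-10

# Sigmoid's best case: its slope never exceeds 0.25.
print(gradient_scale(0.25, 10))   # about 9.5e-07, still tiny

# A slope of 1 at every layer leaves the signal untouched.
print(gradient_scale(1.0, 10))    # 1.0
```

Even in sigmoid's best case the signal shrinks to under a millionth of its original size after ten layers.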

This is the vanishing gradient problem. Deep networks with sigmoid activations couldn't train effectively because the first layers received negligible gradient signals. The layers near the output would train fine, while the layers near the input stayed close to their random starting values.

This was a fundamental barrier to deep learning. Networks with more than a few layers couldn't be trained reliably.

How ReLU Solved It

ReLU's gradient is either 0 (for negative inputs) or 1 (for positive inputs). There is no chain of tiny fractions to multiply together. Gradients pass through ReLU unchanged (for positive activations) or are blocked entirely, so along any path of active neurons the signal doesn't shrink.
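Here is a minimal sketch of that behaviour. It assumes one neuron per layer and ignores the weights, and the input values are invented for illustration.

```python
def relu_slope(z):
    return 1.0 if z > 0 else 0.0

def chain_scale(pre_activations):
    """Multiply the ReLU slopes along one path through the layers."""
    scale = 1.0
    for z in pre_activations:
        scale *= relu_slope(z)
    return scale

# Every neuron on this path had a positive input: the gradient passes unchanged.
active_path = [0.7, 2.1, 0.3, 5.0, 1.2]
print(chain_scale(active_path))   # 1.0

# One neuron on this path had a negative input: the gradient is blocked.
blocked_path = [0.7, 2.1, -0.4, 5.0, 1.2]
print(chain_scale(blocked_path))  # 0.0
```

The result is all or nothing. There is no gradual shrinking from layer to layer.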

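This helped make much deeper networks trainable. Gradients reached the early layers strongly enough to actually update those weights, so the early layers learned. Networks with 50, 100, or more layers also rely on later techniques such as careful weight initialisation, batch normalisation and residual connections, but ReLU removed one of the main obstacles.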

ReLU also helped on speed. Computing sigmoid requires an exponential function. Computing ReLU is a comparison: is this number greater than zero? That's one of the cheapest operations a processor can do. Large networks with many neurons suddenly became much more computationally feasible.

When deep learning took off around 2012, ReLU was standard. Most of the architectures that followed - ResNets, transformers, the models behind ChatGPT and image generation - use ReLU or one of its variants as the hidden layer activation.

The practical takeaway is simple: use ReLU for hidden layers. It works, it's fast, and it avoids the shrinking gradients that held sigmoid networks back. You'll encounter sigmoid in output layers for binary classification problems, and softmax for multi-class outputs. But for hidden layers, ReLU is the default.
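Put together, that advice might look like the following sketch of a tiny forward pass in plain Python. The helper `dense`, the layer sizes and all the weights are hypothetical values picked so the arithmetic is easy to follow. A real project would normally use a deep learning library instead.

```python
import math

def relu(z):
    return max(0.0, z)

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def identity(z):
    return z

def softmax(scores):
    """Turn a list of scores into probabilities that add up to 1."""
    top = max(scores)                      # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dense(inputs, weights, biases, activation):
    """One layer: each row of weights is one neuron."""
    return [activation(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]

# Hidden layer: ReLU.
hidden = dense([1.0, 2.0], [[0.5, 0.25], [-1.0, 0.25]], [0.0, 0.0], relu)
print(hidden)        # [1.0, 0.0]

# Binary classification output: one sigmoid neuron gives a probability.
binary_prob = dense(hidden, [[2.0, -1.0]], [-2.0], sigmoid)[0]
print(binary_prob)   # 0.5

# Multi-class output: raw scores first, then softmax over all of them together.
scores = dense(hidden, [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]], [0.0, 0.0, 0.0], identity)
class_probs = softmax(scores)
print(class_probs)   # three probabilities that sum to 1
```

Softmax is applied to the whole list of scores at once rather than neuron by neuron, which is why it appears as a separate step here.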

Check your understanding

Question 1 of 2

Why do neural networks need activation functions?

Question 2 of 2

Why did ReLU solve the vanishing gradient problem?