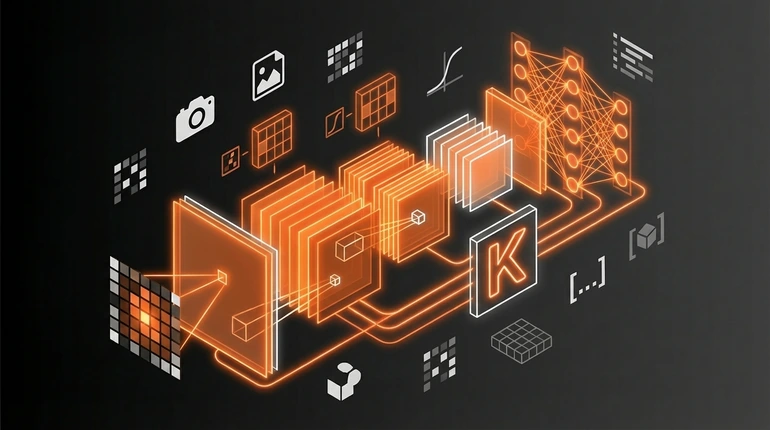

Convolutional Neural Networks (CNNs) with Keras

CNNs were the first architecture that made computer vision actually work. Before them, machines couldn't recognise images reliably. After them, they could outperform humans. That shift mattered.

menu_book In this lesson expand_more

CNNs were the first architecture that made computer vision actually work. Before them, machines couldn't recognise images reliably. After them, they could outperform humans. That shift mattered.

What a CNN is trying to do

You have an image - pixels in a grid. You want to know what it contains. Is it a cat? A dog? A car?

A regular neural network would treat each pixel as a separate input. For a 28x28 image, that's 784 inputs. For a 256x256 image, it's 65,536 inputs. And the network has to learn independently whether pixel 5 relates to pixel 6, whether pixel 142 is important, and so on.

A CNN does something smarter. It assumes that nearby pixels relate to each other, and that the same pattern - like a cat's whisker - might appear in different parts of the image. It looks for small patterns first, then combinations of patterns, then more complex shapes.

It builds understanding from simple to complex, which matches how we actually see. We don't recognise a cat by analysing individual pixels. We recognise edges, then fur texture, then cat-shaped silhouettes, then "that's a cat."

Convolutional layers - what they're detecting

A convolutional layer applies small filters across the image. A filter might be 3x3 or 5x5 pixels. It slides across the image, and at each position it computes a dot product with the image patch beneath it.

What's the filter detecting? Early on, simple patterns: edges, vertical lines, diagonal lines, corners. Later layers take the output from early layers as input and detect more complex patterns - textures, shapes, parts of objects.

You don't hardcode these filters. The network learns them during training, adjusting them so they detect features useful for whatever task you're solving.

The output of a convolutional layer is a set of feature maps - one for each filter. If you have 32 filters, you get 32 feature maps. Each represents where that filter's pattern shows up across the image.

Pooling layers - why they're there

After a convolutional layer, you often have a pooling layer. The most common is max pooling. It takes small regions - like 2x2 - and outputs only the maximum value.

This does two things. First, it shrinks the feature maps, reducing computation. Second, it makes the network tolerant to small shifts. If a pattern moves one pixel, max pooling usually still catches it.

Pooling says: we detected this feature somewhere in this region - we don't care exactly where. That's useful because a cat's eyes appear in different positions in different photos, but we still want to recognise it as a cat.

You don't always need pooling. Modern networks sometimes skip it. But it's standard because it works and it's efficient.

Building a simple CNN in Keras

Here's the structure of a basic CNN in Keras for image classification. Seven layers total: two convolution-pooling pairs, a flatten, a dense layer, and an output.

model = Sequential([

Conv2D(32, 3, activation='relu', input_shape=(28, 28, 1)),

MaxPooling2D(2),

Conv2D(64, 3, activation='relu'),

MaxPooling2D(2),

Flatten(),

Dense(128, activation='relu'),

Dense(10, activation='softmax')

])The first convolutional layer uses 32 filters of size 3x3, detecting simple patterns. Max pooling shrinks the feature maps. The second convolutional layer uses 64 filters and detects more complex patterns built on the first. Another round of max pooling. Then Flatten converts the feature maps into a 1D vector, the Dense layer learns combinations of features, and the output layer gives probabilities across 10 categories.

You compile with an optimiser and loss function using the Keras API, then fit it to image data. The network learns which filters and weights work best for recognising whatever you're training it on. That's the entire idea: stack convolutions and pooling, then fully connected layers at the end.

Where CNNs are still the right tool

CNNs are excellent for computer vision tasks including image classification and object detection. They're efficient, they learn good features, and they work well on reasonable-sized datasets.

But they're not the only option any more. Vision Transformers (ViTs) apply the transformer architecture to images and are competitive with CNNs - sometimes better, especially with lots of data. For most state-of-the-art vision research in the last few years, Transformers have taken over.

So why still learn CNNs? Because they're more efficient with small datasets, simpler to understand, and the intuition - convolutions detect patterns - is valuable for understanding any vision model. For many practical applications, a CNN works fine. You probably don't need cutting-edge.

If you're building something with massive training data and unlimited compute, maybe reach for a Vision Transformer. If you're learning, or if you have reasonable data and want to train fast, CNNs are still the right choice. Transfer learning from models pre-trained on ImageNet makes this even faster. They're not obsolete. They've just been supplemented by approaches that sometimes work better.

Check your understanding

2 questions - select an answer then check it

Question 1 of 2

What does max pooling do in a CNN?

Question 2 of 2

Why are CNNs still worth learning when Vision Transformers exist?