AI Terms Explained: A Beginner's Guide to LLMs, ML, and Every Buzzword in Between

Confused by AI jargon? This plain-English guide explains the difference between AI, ML, LLMs, deep learning, and every other term you keep hearing - in the right order, so each definition builds on the last.


If you've tried to read about artificial intelligence recently, you've probably hit a wall of acronyms within the first paragraph. AI. ML. LLM. NLP. RAG. It sounds like alphabet soup, and most articles assume you already know what these things mean.

This guide doesn't. We'll start from scratch and explain every major term in plain English, in the right order, so each definition builds on the last.

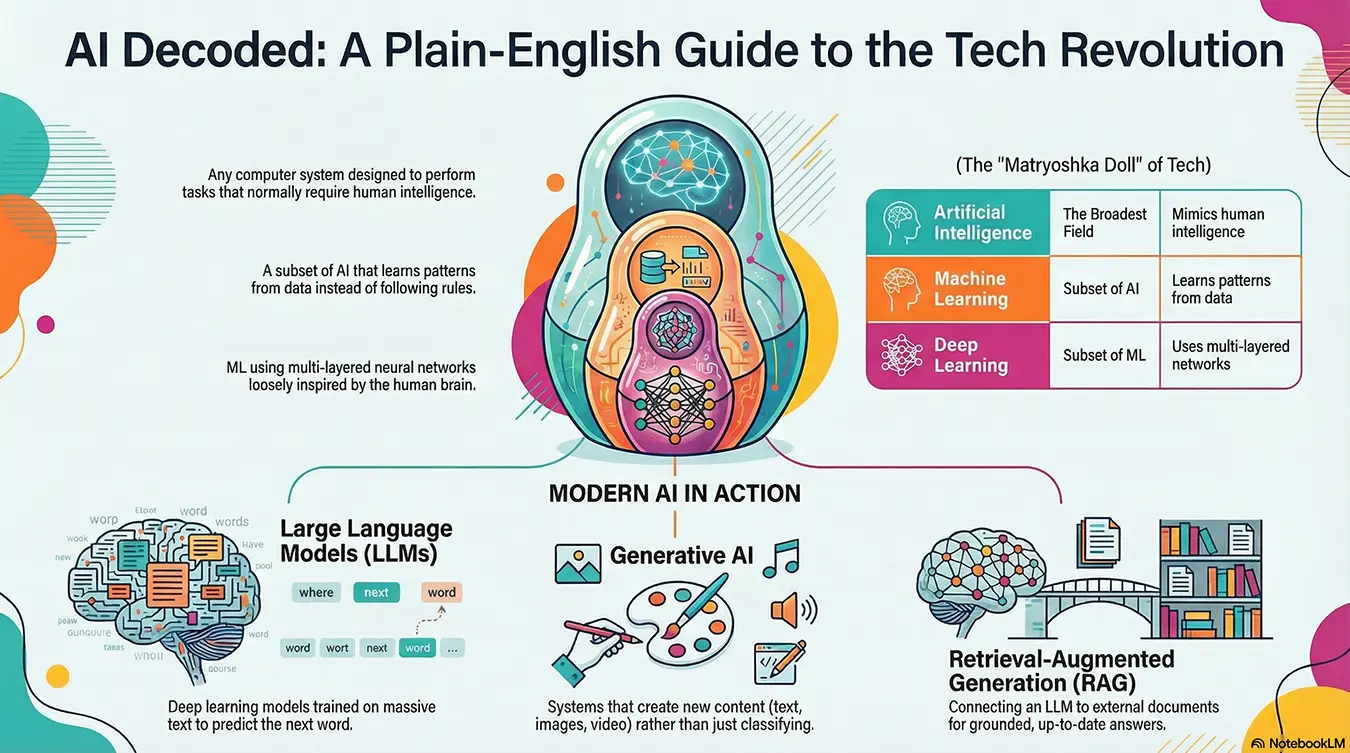

The Big Picture: What Is Artificial Intelligence (AI)?

Artificial intelligence is the broadest term on this list. It refers to any computer system designed to perform tasks that would normally require human intelligence. That includes recognising speech, making decisions, translating languages, writing text, or identifying objects in an image.

AI is the umbrella. Everything else in this article sits underneath it.

The term has been around since the 1950s, but it became a household word after tools like ChatGPT, Google Gemini, and Midjourney brought it into everyday life. When most people say "AI" today, they usually mean a specific type called generative AI, but we'll get to that.

Machine Learning (ML): How AI Actually Gets Smart

Most modern AI systems don't work by following a list of rules written by a programmer. Instead, they learn from data. That process is called machine learning.

Here's the key idea: instead of telling a system exactly what to do in every situation, you show it thousands (or millions) of examples and let it figure out the patterns itself.

A classic example is spam filtering. You don't manually write rules for every possible spam email. You feed the system millions of real emails, labelled "spam" or "not spam," and it learns to spot the difference on its own.
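To make the idea concrete, here is a minimal sketch of a spam filter that learns from labelled examples. The six training emails, the `train` and `classify` functions, and the simple word-counting method are all assumptions made up for illustration. Real filters use far more data and more careful statistics.

```python
from collections import Counter

# Hypothetical labelled examples; a real filter would learn from millions.
TRAINING_EMAILS = [
    ("win a free prize now", "spam"),
    ("claim your free money today", "spam"),
    ("free offer click now", "spam"),
    ("meeting moved to friday", "not spam"),
    ("please review the attached report", "not spam"),
    ("lunch on friday with the team", "not spam"),
]


def train(examples):
    """Count how often each word appears under each label."""
    counts = {"spam": Counter(), "not spam": Counter()}
    for text, label in examples:
        counts[label].update(text.lower().split())
    return counts


def classify(counts, text):
    """Score each label by how often it has seen the email's words."""
    words = text.lower().split()
    scores = {
        label: sum(word_counts[word] for word in words)
        for label, word_counts in counts.items()
    }
    # Ties go to "not spam" so unfamiliar emails are not blocked by default.
    return "spam" if scores["spam"] > scores["not spam"] else "not spam"


model = train(TRAINING_EMAILS)
print(classify(model, "free prize inside"))              # spam
print(classify(model, "report for the friday meeting"))  # not spam
```

Nobody wrote a rule saying "free" is suspicious. The program picked that up from the examples, which is the whole point of machine learning.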

Machine learning is a subset of AI. All machine learning is AI, but not all AI is machine learning. Some older AI systems did use hard-coded rules. ML replaced most of those because it scales far better.

Deep Learning: ML With More Layers

Deep learning is a type of machine learning that uses structures loosely inspired by the human brain, called neural networks. The "deep" part refers to the many layers these networks have, each one processing the data in a slightly different way before passing it on.

Deep learning is what made modern AI genuinely powerful. It's the technology behind image recognition, voice assistants, real-time translation, and almost every impressive AI demo you've seen in the last decade.

To summarise the hierarchy so far:

- AI is the broad field

- Machine learning is one approach within AI

- Deep learning is one technique within machine learning

Neural Networks: The Engine Under the Hood

A neural network is the mathematical structure that deep learning is built on. It's made up of layers of interconnected nodes (loosely analogous to neurons in a brain) that process and transform data as it passes through.

You don't need to understand how they work mathematically to understand AI, but it helps to know they exist. When you hear terms like "layers," "weights," or "parameters," they're all referring to parts of a neural network.
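If you are curious what "layers," "weights," and "parameters" look like in practice, the sketch below runs one input through a tiny two-layer network. Every number in it is a made-up assumption. In a real network the weights are learned during training, and there are vastly more of them.

```python
import math


def sigmoid(x):
    """Squash any number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-x))


def layer_forward(inputs, weights, biases):
    """One layer: each node takes a weighted sum of its inputs, adds a bias,
    and passes the result through sigmoid."""
    return [
        sigmoid(sum(w * x for w, x in zip(node_weights, inputs)) + bias)
        for node_weights, bias in zip(weights, biases)
    ]


# Hypothetical values; a trained network would have learned these.
HIDDEN_WEIGHTS = [[0.5, -0.2], [0.1, 0.8], [-0.3, 0.4]]  # 3 nodes, 2 inputs each
HIDDEN_BIASES = [0.0, 0.1, -0.1]
OUTPUT_WEIGHTS = [[0.7, -0.5, 0.2]]                      # 1 node, 3 inputs
OUTPUT_BIASES = [0.05]


def forward(inputs):
    """Pass the data through the hidden layer, then the output layer."""
    hidden = layer_forward(inputs, HIDDEN_WEIGHTS, HIDDEN_BIASES)
    return layer_forward(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)


def count_parameters(*layers):
    """Parameters are simply every weight plus every bias."""
    return sum(
        sum(len(row) for row in weights) + len(biases)
        for weights, biases in layers
    )


output = forward([1.0, 2.0])
n_params = count_parameters(
    (HIDDEN_WEIGHTS, HIDDEN_BIASES), (OUTPUT_WEIGHTS, OUTPUT_BIASES)
)
print(output)
print(n_params)  # 13
```

This toy network has 13 parameters. The same counting applies to much larger models, just with far bigger layers.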

Large Language Models (LLMs): The Tech Behind ChatGPT

This is probably the term you hear most right now. A large language model is a type of deep learning model trained on enormous amounts of text. Its job is to predict what words should come next in a sequence, and through doing that at massive scale, it develops a surprisingly broad ability to understand and generate human language.
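Next-word prediction can be sketched with nothing more than counting. The example below is not how an LLM works internally (LLMs use neural networks, not lookup tables), and the tiny corpus and function names are assumptions for illustration. It only shows the basic task: given the text so far, pick a likely next word.

```python
from collections import Counter, defaultdict

# Hypothetical training text; a real LLM trains on vastly more.
CORPUS = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."


def train_bigrams(text):
    """For every word, count which words were seen directly after it."""
    words = text.split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(model, word):
    """Return the word most often seen after `word`, or None if unseen."""
    candidates = model.get(word)
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


def generate(model, start, length):
    """Repeatedly predict the next word and append it to the text."""
    words = [start]
    for _ in range(length):
        nxt = predict_next(model, words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)


model = train_bigrams(CORPUS)
print(predict_next(model, "sat"))  # on
print(generate(model, "dog", 4))   # dog sat on the cat
```

Notice that the generated sentence never appears in the training text. Even this crude model produces something new by chaining predictions together.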

The "large" part refers to the number of parameters - the internal settings the model adjusts during training. Modern LLMs range from a few billion parameters to hundreds of billions or more.

Examples of LLMs include:

- GPT-4 / GPT-4o (OpenAI, powers ChatGPT)

- Claude (Anthropic)

- Gemini (Google)

- Llama (Meta)

LLMs can write, summarise, translate, answer questions, write code, and hold conversations. They're the backbone of most AI tools you encounter day-to-day. You can compare models from Anthropic, OpenAI and Google side by side using the LLM Chat tool.

Generative AI: AI That Creates Things

Generative AI refers to AI systems that produce new content - whether that's text, images, audio, video, or code.

LLMs are a type of generative AI focused on text. But generative AI also includes:

- Image generators like Midjourney, DALL-E, and Stable Diffusion

- Video generators like Sora and Veo

- Music generators like Suno and Udio

- Code generators like GitHub Copilot

The defining feature is that these systems don't just classify or retrieve - they create something new based on a prompt. If you want to try generating text content yourself, the AI Copyeditor and Content Repurposer are good starting points.

Natural Language Processing (NLP): Teaching Machines to Understand Language

Natural language processing is the field of AI concerned with helping computers understand, interpret, and generate human language.

It's an older term that predates the LLM era. Before large language models existed, NLP covered everything from basic spell-checkers and sentiment analysis tools to early chatbots and search algorithms.

Today, LLMs have absorbed most of what NLP used to handle and taken it much further. But NLP remains the correct umbrella term for the broader discipline. If a researcher is working on language-related AI problems, they're working in NLP.

Foundation Models: The Base Layer

A foundation model is a large model trained on broad data that can be adapted for many different tasks. LLMs are a type of foundation model, but the term also covers models trained on images, audio, and other data types.

The idea is that you train one large, general-purpose model at great expense, and then use it as a starting point for many specific applications. This is more efficient than building a new model from scratch for every use case.

Fine-Tuning: Specialising a General Model

Fine-tuning is the process of taking a foundation model and training it further on a specific, smaller dataset to make it better at a particular task.

A general LLM might be fine-tuned on legal documents to make it better at legal drafting, or on customer service conversations to make it a better support agent. The original model's broad knowledge stays largely intact, but it gains specialist ability in a particular domain. For a broader look at how these models are being applied in practice right now, see AI in 2026: How LLMs Are Reshaping Search, Content and Work.
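The mechanics of fine-tuning can be sketched with a model that has just two parameters instead of billions. Everything here is an assumption for illustration: the datasets are invented, and the "model" is a straight line, not a language model. What carries over is the key move: training continues from the already-trained parameters rather than starting from zero.

```python
def mean_squared_error(w, b, data):
    """Average squared gap between the model's guess (w*x + b) and the true y."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def train(w, b, data, steps, learning_rate=0.01):
    """Run gradient descent from the given starting parameters."""
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Hypothetical datasets, invented for illustration.
GENERAL_DATA = [(float(x), 2.0 * x) for x in range(-5, 6)]  # broad: y = 2x
SPECIALIST_DATA = [(1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]      # narrow: y = 2x + 1

# "Pre-training": start from zero and learn from the broad dataset.
base_w, base_b = train(0.0, 0.0, GENERAL_DATA, steps=500)

# "Fine-tuning": start from the pre-trained parameters, not from zero,
# and take a small number of extra steps on the specialist data.
tuned_w, tuned_b = train(base_w, base_b, SPECIALIST_DATA, steps=50)

base_loss = mean_squared_error(base_w, base_b, SPECIALIST_DATA)
tuned_loss = mean_squared_error(tuned_w, tuned_b, SPECIALIST_DATA)
print(f"specialist error before: {base_loss:.3f}, after: {tuned_loss:.3f}")
```

Printing `mean_squared_error(tuned_w, tuned_b, GENERAL_DATA)` as well shows how far the extra training has moved the model away from its original fit, which is the trade-off fine-tuning has to manage.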

RAG (Retrieval-Augmented Generation): Giving AI Access to Current Information

LLMs are trained on data up to a certain date. After that, they don't automatically know about new events, internal documents, or proprietary data.

Retrieval-augmented generation (RAG) addresses this by connecting an LLM to an external knowledge source. When you ask a question, the system retrieves relevant documents from that source first, then passes them to the LLM along with your question. The model uses those documents to generate an answer grounded in them, which is usually more accurate than one produced from its training data alone.
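The retrieve-then-ask flow can be sketched in a few lines. This is a simplified illustration under stated assumptions: the three documents are invented, and retrieval here just counts shared words, whereas production systems typically use more sophisticated search. The final step, sending the prompt to an LLM, is left out because it depends on which model you use.

```python
import re

# Hypothetical knowledge source, e.g. a company's internal documents.
DOCUMENTS = [
    "Our refund policy allows returns within 30 days of purchase.",
    "The office is closed on public holidays.",
    "Support is available by email from Monday to Friday.",
]


def words(text):
    """Lower-case the text and return its distinct words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    question_words = words(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & words(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, documents):
    """Put the retrieved documents in front of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


question = "How many days do I have to request a refund?"
prompt = build_prompt(question, DOCUMENTS)
print(prompt)
```

The model never needed retraining to "know" the refund policy. The relevant document was simply placed in the prompt alongside the question.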

RAG is widely used in enterprise AI tools because it lets businesses plug their own data into an AI system without retraining the whole model. Search tools like Perplexity use RAG to provide cited, up-to-date answers - which is why they can reference recent news where an LLM relying on its training data alone cannot.

Hallucination: When AI Makes Things Up

Hallucination is what happens when an AI generates confident-sounding information that is simply wrong. It might cite a paper that doesn't exist, give you a fake statistic, or describe events that never happened.

It's one of the most important limitations of current LLMs to understand. They don't "know" things the way humans do. They generate text that is statistically likely to follow from what came before. Sometimes that produces brilliant results. Sometimes it produces plausible-sounding nonsense.

This is why human oversight still matters - and why RAG has become popular: grounding the model in real documents reduces the chance it invents an answer.

Tokens: How AI Models Read Text

LLMs don't process text word by word. They break it into chunks called tokens, which can be a word, part of a word, punctuation, or even a space.

The word "unbelievable" might become two or three tokens. A short sentence might be 10 tokens. This matters because LLMs have a context window - a limit on how many tokens they can process at once. Think of it as the model's working memory. Longer context windows mean the model can handle longer documents or conversations without losing track.
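Here is a toy sketch of both ideas: splitting a word into tokens, and trimming text to fit a context window. The vocabulary and the greedy longest-match method are assumptions for illustration. Real tokenizers learn their vocabularies from data and use more involved algorithms, so actual token splits will differ.

```python
# Hypothetical vocabulary of known word pieces.
VOCAB = {"un", "believ", "able", "the", "cat", "sat"}


def subword_tokenize(word, vocab):
    """Greedily match the longest known piece at each position."""
    tokens = []
    i = 0
    while i < len(word):
        for j in range(len(word), i, -1):
            if word[i:j] in vocab:
                tokens.append(word[i:j])
                i = j
                break
        else:
            # No known piece starts here, so the character is its own token.
            tokens.append(word[i])
            i += 1
    return tokens


def tokenize(text, vocab):
    """Tokenize every whitespace-separated word in the text."""
    tokens = []
    for word in text.lower().split():
        tokens.extend(subword_tokenize(word, vocab))
    return tokens


def fit_to_context_window(tokens, max_tokens):
    """Keep only the most recent tokens that fit in the window."""
    if max_tokens <= 0:
        return []
    return tokens[-max_tokens:]


print(subword_tokenize("unbelievable", VOCAB))  # ['un', 'believ', 'able']

tokens = tokenize("the cat sat unbelievable", VOCAB)
print(len(tokens))                       # 6
print(fit_to_context_window(tokens, 4))  # ['sat', 'un', 'believ', 'able']
```

With a window of four tokens, "the" and "cat" fall out of view. That is what "losing track" means in a long conversation: the earliest text no longer fits.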

Prompt and Prompt Engineering: How You Talk to AI

A prompt is the input you give to an AI model - the question, instruction, or text you provide to get a response.

Prompt engineering is the practice of crafting prompts deliberately to get better outputs. This might involve giving the model a role to play, providing examples of the format you want, specifying constraints, or breaking a complex task into steps.
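Those techniques can be combined into a reusable template. The sketch below is one possible layout, not a standard: the `build_prompt` function, its section labels, and the sample copyediting task are all assumptions for illustration.

```python
def build_prompt(role, task, examples, constraints):
    """Assemble a structured prompt from a role, a task, examples, and constraints."""
    lines = [f"You are {role}.", "", f"Task: {task}", ""]
    if examples:
        lines.append("Examples:")
        for source, result in examples:
            lines.append(f"Input: {source}")
            lines.append(f"Output: {result}")
        lines.append("")
    if constraints:
        lines.append("Constraints:")
        lines.extend(f"- {constraint}" for constraint in constraints)
    return "\n".join(lines).strip()


prompt = build_prompt(
    role="a careful copyeditor",
    task="Fix grammar and spelling in the text I provide.",
    examples=[("their going home", "They're going home.")],
    constraints=["Keep the author's meaning.", "Return only the corrected text."],
)
print(prompt)
```

Compare the result with a bare prompt like "fix this text". The structured version tells the model who it is, what good output looks like, and what to avoid.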

It sounds simple, but skilled prompt engineering can dramatically improve the quality of what you get back from an AI system. The AI Copyeditor on this site is a good example of structured prompting in action - it uses a carefully engineered system prompt to produce consistent editing outputs rather than generic rewrites.

AGI: The Goal That Doesn't Exist Yet

Artificial general intelligence (AGI) refers to a hypothetical AI system capable of performing any intellectual task a human can, at human level or above, across all domains.

Current AI systems - including the most capable LLMs - are narrow AI. They're very good at specific things (language, image generation, coding) but they don't have general reasoning, consciousness, or the ability to learn an entirely new skill from scratch the way humans do.

AGI remains an open research goal. Organisations like OpenAI and Anthropic are explicitly working towards it, with safety research running alongside capability work. Depending on who you ask, it's anywhere from five years to fifty years away, or possibly never. You'll see it discussed a lot, but no one has built it yet.

Quick Reference: All the Terms at a Glance

| Term | What It Means |
|---|---|
| AI | Artificial intelligence. The broad field covering any computer system that mimics human intelligence. |
| Machine Learning (ML) | A type of AI that learns from data rather than following pre-written rules. |
| Deep Learning | A type of ML using multi-layered neural networks. Powers most modern AI. |
| Neural Network | The mathematical structure deep learning is built on. |
| LLM | Large language model. A deep learning model trained on huge amounts of text to understand and generate language. |
| Generative AI | AI that creates new content: text, images, video, audio, or code. |
| NLP | Natural language processing. The field focused on making computers understand human language. |
| Foundation Model | A large, general-purpose model used as a base for many applications. |
| Fine-Tuning | Training a foundation model further on specific data to specialise it. |
| RAG | Retrieval-augmented generation. Connecting an LLM to external documents for grounded, up-to-date answers. |
| Hallucination | When an AI generates confident but factually wrong information. |
| Token | A chunk of text that LLMs process. Words, parts of words, or punctuation. |
| Prompt | The input you give to an AI model. |
| Prompt Engineering | The practice of crafting prompts to get better AI outputs. |
| AGI | Artificial general intelligence. A hypothetical future AI with human-level ability across all tasks. |

Understanding these terms won't make you an AI engineer, but it will help you cut through the hype, ask better questions, and make smarter decisions about where AI can actually help you.